You’ve heard of OpenAI and Nvidia, but do you know who else is involved in the AI wave and how they all fit together?

A few months ago, I visited the MoMA in NYC and saw the work Anatomy of an AI System by Kate Crawford and Vladan Joler. The work examines the Amazon Alexa supply chain from raw resource extraction to device disposal. This made me think about everything that goes into producing today’s generative AI (GenAI) powered applications. By digging into this question, I came to understand the many layers of physical and digital engineering that GenAI applications are built upon.

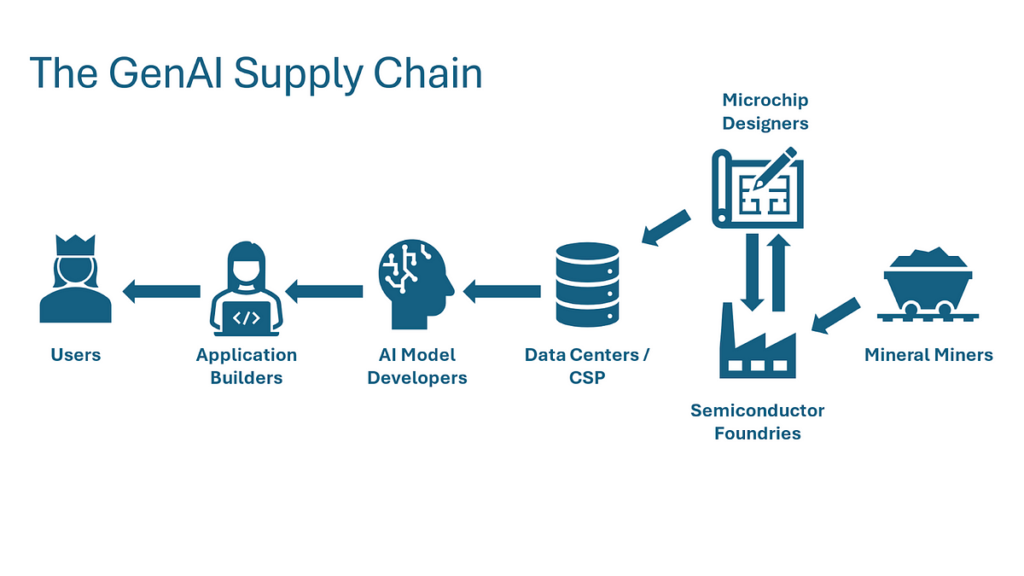

I’ve written this piece to introduce readers to the major components of the GenAI value chain, what role each plays, and who the major players are at each stage. Along the way, I hope to illustrate the range of businesses powering the growth of AI, how different technologies build upon each other, and where vulnerabilities and bottlenecks exist. Starting with the user-facing applications emerging from technology giants like Google and the latest batch of startups, we’ll work backward through the value chain down to the sand and rare earth metals that go into computer chips.

Technology giants, corporate IT departments, and legions of new startups are in the early stages of experimenting with potential use cases for GenAI. These applications may be the start of a new paradigm in computer applications, marked by radical new ways of human-computer interaction and unprecedented capabilities to understand and leverage unstructured and previously untapped data sources (e.g., audio).

Many of the most impactful advances in computing have come from advances in human-computer interaction (HCI). From the development of the GUI to the mouse to the touch screen, these advances have greatly expanded the leverage users gain from computing tools. GenAI models will further remove friction from this interface by equipping computers with the power and flexibility of human language. Users will be able to issue instructions and tasks to computers just as they might to a reliable human assistant. Some examples of products innovating in the HCI space are:

- Siri (AI Voice Assistant): Enhances Apple’s mobile assistant with the ability to understand broader requests and questions

- Palantir’s AIP (Autonomous Agents): Strips complexity from large, powerful tools through a chat interface that directs users to the desired functionality and actions

- Lilac Labs (Customer Service Automation): Automates drive-through customer ordering with voice AI

GenAI equips computer systems with agency and flexibility that were previously impossible, when sets of preprogrammed procedures guided their functionality and their data inputs needed to fit well-defined rules established by the programmer. This flexibility allows applications to perform more complex and open-ended knowledge tasks that were previously strictly in the human domain. Some examples of new applications leveraging this flexibility are:

- GitHub Copilot (Coding Assistant): Amplifies programmer productivity by implementing code based on the user’s intent and existing code base

- LenAI (Knowledge Assistant): Saves knowledge workers time by summarizing meetings, extracting critical insights from discussions, and drafting communications

- Perplexity (AI Search): Answers user questions reliably with citations by synthesizing traditional internet searches with AI-generated summaries of internet sources

A diverse group of players is driving the development of these use cases. Hordes of startups are springing up, with 86 of Y Combinator’s W24 batch focused on AI technologies. Major tech companies like Google have also launched GenAI products and features. For instance, Google is leveraging its Gemini LLM to summarize results in its core search products. Traditional enterprises are launching major initiatives to understand how GenAI can complement their strategy and operations. JP Morgan CEO Jamie Dimon said AI is “unbelievable for marketing, risk, fraud. It’ll help you do your job better.” As companies understand how AI can solve problems and drive value, use cases and demand for GenAI will multiply.

With the release of OpenAI’s ChatGPT (powered by the GPT-3.5 model) in late 2022, GenAI exploded into the public consciousness. Today, models like Claude (Anthropic), Gemini (Google), and Llama (Meta) have challenged GPT for supremacy. The model provider market and development landscape are still in their infancy, and many open questions remain, such as:

- Will smaller domain/task-specific models proliferate, or will large models handle all tasks?

- How far can model sophistication and capability advance under the current transformer architecture?

- How will capabilities advance as model training approaches the limit of all human-created text data?

- Which players will challenge the current supremacy of OpenAI?

While speculating about the capability limits of artificial intelligence is beyond the scope of this discussion, the market for GenAI models is likely large (many prominent investors certainly value it highly). What do model builders do to justify such high valuations and so much excitement?

The research teams at companies like OpenAI are responsible for making architectural choices, compiling and preprocessing training datasets, managing training infrastructure, and more. Research scientists in this field are rare and highly valued, with the average engineer at OpenAI earning over $900k. Not many companies can attract and retain people with the highly specialized skillset required to do this work.

Compiling the training datasets involves crawling, collecting, and processing all the text (or audio or visual) data available on the internet and from other sources (e.g., digitized libraries). After compiling these raw datasets, engineers layer in relevant metadata (e.g., tagging categories), tokenize data into chunks for model processing, format data into efficient training file formats, and impose quality control measures.
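To make the tokenizing and chunking step more concrete, here is a minimal sketch in Python. It assumes a toy whitespace tokenizer and a two-sentence corpus; the function names (`build_vocab`, `tokenize`, `chunk`) are hypothetical, and production pipelines typically use subword tokenizers rather than whole words.

```python
def build_vocab(texts):
    """Assign an integer ID to every distinct whitespace-separated word."""
    vocab = {}
    for text in texts:
        for word in text.lower().split():
            if word not in vocab:
                vocab[word] = len(vocab)
    return vocab


def tokenize(text, vocab):
    """Convert a string into the list of integer IDs a model would consume."""
    return [vocab[word] for word in text.lower().split()]


def chunk(token_ids, chunk_size):
    """Split a token sequence into fixed-size pieces (the last may be shorter)."""
    return [token_ids[i:i + chunk_size] for i in range(0, len(token_ids), chunk_size)]


# Toy corpus, assumed purely for illustration.
corpus = ["the cat sat on the mat", "the dog sat"]
vocab = build_vocab(corpus)
ids = tokenize(corpus[0], vocab)
chunks = chunk(ids, 4)
```

The point of the sketch is only that text becomes sequences of integers before a model ever sees it. Metadata tagging, file formatting, and quality control would sit around these steps.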

While the market for AI model-powered services may be worth trillions within a decade, many barriers to entry prevent all but the most well-resourced companies from building cutting-edge models. The highest barrier to entry is the millions to billions of dollars in capital investment required for model training. To train the latest models, companies must either construct their own data centers or make significant purchases from cloud service providers to leverage their data centers. While Moore’s law continues to rapidly lower the price of computing power, this is more than offset by the rapid scale-up in model sizes and computation requirements. Training the latest cutting-edge models requires billions in data center investment (in March 2024, media reports described an investment of $100B by OpenAI and Microsoft in data centers to train next-gen models). Few companies can afford to allocate billions toward training an AI model (only tech giants or exceedingly well-funded startups like Anthropic and Safe Superintelligence).

Finding the right talent is also extremely difficult. Attracting this specialized talent requires more than a 7-figure compensation package; it requires connections with the right fields and academic communities, and a compelling value proposition and vision for the technology’s future. Existing players’ high access to capital and domination of the specialized talent market will make it difficult for new entrants to challenge their position.

Understanding a bit about the history of the AI model market helps us understand the current landscape and how the market may evolve. When ChatGPT burst onto the scene, it felt like a breakthrough revolution to many, but was it? Or was it another incremental (albeit impressive) improvement in a long series of advances that were invisible outside of the development world? The team that developed ChatGPT built upon decades of research and publicly available tools from industry, academia, and the open-source community. Most notable is the transformer architecture itself, the critical insight driving not just ChatGPT but most AI breakthroughs in the past five years. First proposed by Google in their 2017 paper Attention Is All You Need, the transformer architecture is the foundation for models like Stable Diffusion, GPT-4, and Midjourney. The authors of that 2017 paper have founded some of the most prominent AI startups (e.g., CharacterAI, Cohere).

Given the common transformer architecture, what will enable some models to “win” against others? Variables like model size, input data quality/quantity, and proprietary research differentiate models. Model size has been shown to correlate with improved performance, and the best-funded players could differentiate by investing more in model training to further scale up their models. Proprietary data sources (such as those possessed by Meta from its user base and Elon Musk’s xAI from Tesla’s driving videos) could help some models learn what other models don’t have access to. GenAI is still a highly active area of ongoing research, and research breakthroughs at companies with the best talent will partly determine the pace of advancement. It’s also unclear how strategies and use cases will create opportunities for different players. Perhaps application builders will leverage multiple models to reduce dependency risk or to align a model’s unique strengths with specific use cases (e.g., research, interpersonal communications).

We discussed how model providers invest billions to build or rent computing resources to train these models. Where is that spending going? Much of it goes to cloud service providers like Microsoft’s Azure (used by OpenAI for GPT) and Amazon Web Services (used by Anthropic for Claude).

Cloud service providers (CSPs) play a crucial role in the GenAI value chain by providing the necessary infrastructure for model training (they also often provide infrastructure to the end application builders, but this section will focus on their interactions with the model builders). Major model builders mostly do not own and operate their own computing facilities (known as data centers). Instead, they rent vast amounts of computing power from the hyperscaler CSPs (AWS, Azure, and Google Cloud) and other providers.

CSPs produce the resource of computing power (made by feeding electricity into specialized microchips, thousands of which make up a data center). To train their models, engineers give the computers operated by CSPs instructions to run computationally expensive matrix calculations over their input datasets in order to calculate billions of model weights. This model training phase is responsible for the high upfront cost of investment. Once these weights are calculated (i.e., the model is trained), model providers use these parameters to respond to user queries (i.e., make predictions on a novel dataset). This is a less computationally expensive process known as inference, also done using CSP computing power.
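The difference in cost between training and inference can be illustrated with a deliberately tiny sketch. It assumes a two-parameter linear model fitted by gradient descent on made-up data; real LLM training follows the same broad pattern (repeated passes that adjust weights) at a vastly larger scale, and the names `train` and `predict` are hypothetical.

```python
def train(xs, ys, learning_rate=0.05, epochs=2000):
    """Fit y = w * x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):  # many passes over the data: the expensive part
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        grad_w = 2 * sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = 2 * sum(errors) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


def predict(w, b, x):
    """Inference: a single multiply-add using the already trained weights."""
    return w * x + b


# Made-up training data generated from y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]
w, b = train(xs, ys)
prediction = predict(w, b, 10.0)
```

Here training loops over the whole dataset 2,000 times to settle on two weights, while answering a query afterward is one cheap calculation. That asymmetry is why the upfront investment is concentrated in the training phase.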

The cloud service provider’s role is building, maintaining, and administering data centers where this “computing power” resource is produced and used by model builders. CSP activities include acquiring computer chips from suppliers like Nvidia, “racking and stacking” server units in specialized facilities, and performing regular physical and digital maintenance. They also develop the entire software stack to manage these servers and provide developers with an interface to access the computing power and deploy their applications.

The principal operating expense for data centers is electricity, with AI-fueled data center expansion likely to drive a significant increase in electricity usage in the coming decades. For perspective, a standard query to ChatGPT uses ten times as much energy as an average Google search. Goldman Sachs estimates that AI demand will double data centers’ share of global electricity usage by the end of the decade. Just as significant investments must be made in computing infrastructure to support AI, similar investments must be made to power this computing infrastructure.

Looking ahead, cloud service providers and their model builder partners are in a race to construct the largest and most powerful data centers capable of training next-generation models. The data centers of the future, like those under development by the partnership of Microsoft and OpenAI, will require thousands to millions of new cutting-edge microchips. The substantial capital expenditures by cloud service providers to construct these facilities are now driving record profits at the companies that help build these microchips, notably Nvidia (design) and TSMC (manufacturing).

At this point, everyone’s likely heard of Nvidia and its meteoric, AI-fueled stock market rise. It’s become a cliche to say that the tech giants are locked in an arms race and Nvidia is the only supplier, but is it true? For now, it is. Nvidia designs a type of computer microchip known as a graphics processing unit (GPU) that is critical for AI model training. What is a GPU, and why is it so crucial for GenAI? Why are most conversations in AI chip design centered around Nvidia and not other microchip designers like Intel, AMD, or Qualcomm?

Graphics processing units (as the name suggests) were originally used to serve the computer graphics market. Graphics for CGI movies like Jurassic Park and video games like Doom require expensive matrix computations, but these computations can be done in parallel rather than in series. Standard computer processors (CPUs) are optimized for fast sequential computation (where the input to one step may be the output of a prior step), but they cannot do large numbers of calculations in parallel. GPUs make the opposite trade-off: their optimization for “horizontally” scaled parallel computation rather than accelerated sequential computation was well-suited to computer graphics, and it also turned out to be ideal for AI training.
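The contrast between parallel and sequential work can be sketched in a few lines of Python. This is an illustration only: it uses a thread pool to show that the cells of a matrix product do not depend on each other, and it does not demonstrate a real speedup or how GPU hardware actually schedules work. The function names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def cell(a, b, i, j):
    """One output cell of a matrix product; it needs no other output cell."""
    return sum(a[i][k] * b[k][j] for k in range(len(b)))


def matmul_parallel(a, b):
    """Compute every cell as an independent task, in whatever order the pool picks."""
    rows, cols = len(a), len(b[0])
    coords = [(i, j) for i in range(rows) for j in range(cols)]
    with ThreadPoolExecutor() as pool:
        values = list(pool.map(lambda ij: cell(a, b, *ij), coords))
    return [values[r * cols:(r + 1) * cols] for r in range(rows)]


def running_product(xs):
    """A sequential computation: each step needs the previous step's result."""
    out, acc = [], 1
    for x in xs:
        acc *= x
        out.append(acc)
    return out


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
product = matmul_parallel(a, b)          # cells could be computed in any order
chain = running_product([1, 2, 3, 4])    # steps must run one after another
```

Because every `cell` call is independent, the work in `matmul_parallel` could be spread across thousands of simple cores, which is the shape of problem GPUs are built for. `running_product` cannot be split the same way, so it benefits more from one fast core.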

Given that GPUs served a niche market until the rise of video games in the late 90s, how did they come to dominate the AI hardware market, and how did GPU makers displace Silicon Valley’s original titans like Intel? In 2012, the AlexNet model won the ImageNet machine learning competition by using Nvidia GPUs to accelerate model training. Its authors showed that the parallel computation power of GPUs was ideal for training ML models because, like computer graphics, ML model training relies on highly parallel matrix computations. Today’s LLMs have expanded upon AlexNet’s initial breakthrough to scale up to quadrillions of arithmetic computations and billions of model parameters. With this explosion in parallel computing demand since AlexNet, Nvidia has positioned itself as the only viable chip supplier for machine learning and AI model training thanks to heavy upfront investment and clever lock-in strategies.

Given the massive market opportunity in GPU design, it’s reasonable to ask why Nvidia has no significant challengers (at the time of this writing, Nvidia holds 70–95% of the AI chip market share). Nvidia’s early investments in the ML and AI market, before ChatGPT and even before AlexNet, were key in establishing a hefty lead over other chipmakers like AMD. Nvidia allocated significant investment to research and development for the scientific computing market segment (which would become the ML and AI segment) before there was a clear commercial use case. Because of these early investments, Nvidia had already developed the best supplier and customer relationships, engineering talent, and GPU technology when the AI market took off.

Perhaps Nvidia’s most significant early investment, and now its deepest moat against competitors, is its CUDA programming platform. CUDA is a low-level software tool that enables engineers to interface with Nvidia’s chips and write natively parallel algorithms. Many models, such as Llama, leverage higher-level Python libraries built upon these foundational CUDA tools. These lower-level tools enable model designers to focus on higher-level architecture design choices without worrying about the complexities of executing calculations at the GPU processor core level. With CUDA, Nvidia built a software solution to strategically complement its hardware GPU products by solving many software challenges AI builders face.

CUDA not only simplifies the process of building parallelized AI and machine learning models on Nvidia chips, it also locks developers into the Nvidia ecosystem, raising significant barriers to exit for any company looking to switch to Nvidia’s competitors. Programs written in CUDA can’t run on competitor chips, which means that to switch off Nvidia chips, companies must rebuild not just the functionality of the CUDA platform but also any parts of their tech stack that depend on CUDA outputs. Given the massive stack of AI software built upon CUDA over the past decade, there is a substantial switching cost for anyone looking to move to competitors’ chips.

Companies like Nvidia and AMD design chips, but they don’t manufacture them. Instead, they rely on semiconductor manufacturing specialists known as foundries. Modern semiconductor manufacturing is one of the most complex engineering processes ever invented, and these foundries are a long way from most people’s image of a traditional factory. For instance, transistors on the latest chips are only 12 silicon atoms long, shorter than the wavelength of visible light. Modern microchips pack tens of billions of these transistors onto a small piece of silicon, etched into atom-scale integrated circuits.

The key to manufacturing semiconductors is a process known as photolithography. Photolithography involves etching intricate patterns on a silicon wafer, a crystallized form of the element silicon used as the base for the microchip. The process involves coating the wafer with a light-sensitive chemical called photoresist and then exposing it to ultraviolet light through a mask that contains the desired circuit. The exposed areas of the photoresist are then developed, leaving a pattern that can be etched into the wafer. The most critical machines for this process are developed by the Dutch company ASML, which produces extreme ultraviolet (EUV) lithography systems and holds a stranglehold on its segment of the AI value chain similar to Nvidia’s.

Just as Nvidia came to dominate the GPU design market, its primary manufacturing partner, Taiwan Semiconductor Manufacturing Company (TSMC), holds a similarly large share of the manufacturing market for the most advanced AI chips. To understand TSMC’s place in semiconductor manufacturing, it’s helpful to understand the broader foundry landscape.

Semiconductor manufacturers are split between two main foundry models: pure-play and integrated. Pure-play foundries, such as TSMC and GlobalFoundries, focus exclusively on manufacturing microchips for other companies without designing their own chips (the complement to fabless companies like Nvidia and AMD, which design but do not manufacture their chips). These foundries specialize in fabrication services, allowing fabless semiconductor companies to design microchips without heavy capital expenditures on manufacturing facilities. In contrast, integrated device manufacturers (IDMs) like Intel and Samsung design, manufacture, and sell their chips. The integrated model provides greater control over the entire production process but requires significant investment in both design and manufacturing capabilities. The pure-play model has gained popularity in recent decades because of the flexibility and capital efficiency it offers fabless designers, while the integrated model remains advantageous for companies with the resources to maintain both design and fabrication expertise.

It’s impossible to discuss semiconductor manufacturing without considering the vital role of Taiwan and the resulting geopolitical risks. In the late twentieth century, Taiwan transformed itself from a low-margin, low-skilled manufacturing island into a semiconductor powerhouse, largely due to strategic government investments and a focus on high-tech industries. The establishment and growth of TSMC have been central to this transformation, positioning Taiwan at the heart of the global technology supply chain and leading to the growth of many smaller companies that support manufacturing. However, this dominance has also made Taiwan a critical focal point in the ongoing geopolitical struggle, as China views the island as a breakaway province and seeks greater control. Any escalation of tensions could disrupt the global supply of semiconductors, with far-reaching consequences for the global economy, particularly in AI.

At the most basic level, all manufactured objects are created from raw materials extracted from the earth. For the microchips used to train AI models, silicon and metals are the primary constituents. These, along with the chemicals used in the photolithography process, are the main inputs foundries use to manufacture semiconductors. While the US and its allies have come to dominate many parts of the value chain, their AI rival, China, has a firmer grasp on raw metals and other inputs.

The primary ingredient in any microchip is silicon (hence the name Silicon Valley). Silicon is one of the most abundant elements in the earth’s crust and is usually mined as silicon dioxide (i.e., quartz or silica sand). Producing silicon wafers involves mining the mineral quartzite, crushing it, and then extracting and purifying the elemental silicon. Next, chemical companies such as Sumco and Shin-Etsu Chemical convert pure silicon into wafers using a process called Czochralski growth, in which a seed crystal is dipped into molten high-purity silicon and slowly pulled upward while rotating. This process creates a large single-crystal silicon ingot, which is sliced into thin wafers that form the substrate for semiconductor manufacturing.

Beyond silicon, computer chips also require trace amounts of rare earth metals and other critical minerals. A critical step in semiconductor manufacturing is doping, in which impurities are added to the silicon to control conductivity. Doping is commonly done with elements like arsenic and gallium, and chips also rely on metals like germanium and copper (strictly speaking, these are critical minerals rather than rare earth elements, but they raise similar supply concerns). China dominates global rare earth metal production, accounting for over 60% of mining and 85% of processing. Other significant rare earth metal producers include Australia, the US, Myanmar, and the Democratic Republic of the Congo. The US’ heavy reliance on China for rare earth metals poses significant geopolitical risks, as supply disruptions could severely affect the semiconductor industry and other high-tech sectors. This dependence has prompted efforts to diversify supply chains and develop domestic rare earth production capabilities in the US and other countries, though progress has been slow due to environmental concerns and the complex nature of rare earth processing.

The physical and digital technology stacks and value chains that support the development of AI are intricate and built upon decades of academic and industrial advances. The value chain encompasses end application builders, AI model builders, cloud service providers, chip designers, chip fabricators, and raw material suppliers, among many other key contributors. While much of the attention has been on major players like OpenAI, Nvidia, and TSMC, significant opportunities and bottlenecks exist at all points along the value chain. Thousands of new companies will be born to solve these problems. While companies like Nvidia and OpenAI may be the Intel and Google of their generation, the personal computing and internet booms produced thousands of other unicorns to fill niches and solve problems that came with inventing a new economy. The opportunities created by the shift to AI will take decades to be understood and realized, much as with personal computing in the 70s and 80s and the internet in the 90s and 00s.

While entrepreneurship and clever engineering may solve many problems in the AI market, some problems involve far greater forces. No challenge is greater than rising geopolitical tension with China, which owns (or claims to own) much of the raw materials and manufacturing markets. This contrasts with the US and its allies, who control most downstream stages of the chain, including chip design and model training. The struggle for AI dominance is especially important because the opportunity unlocked by AI is not just economic but also military. Semi-autonomous weapons systems and cyberwarfare agents leveraging AI capabilities may play decisive roles in the conflicts of the coming decades. Modern defense technology startups like Palantir and Anduril already show how AI capabilities can expand battlefield visibility and accelerate decision loops to gain a potentially decisive advantage. Given AI’s high potential to disrupt the global order and the delicate balance of power between the US and China, it is imperative that the two nations seek to maintain a cooperative relationship aimed at mutually beneficial development of AI technology for the betterment of global prosperity. Only by solving problems across the supply chain, from the scientific to the industrial to the geopolitical, can the promise of AI to supercharge humanity’s capabilities be realized.