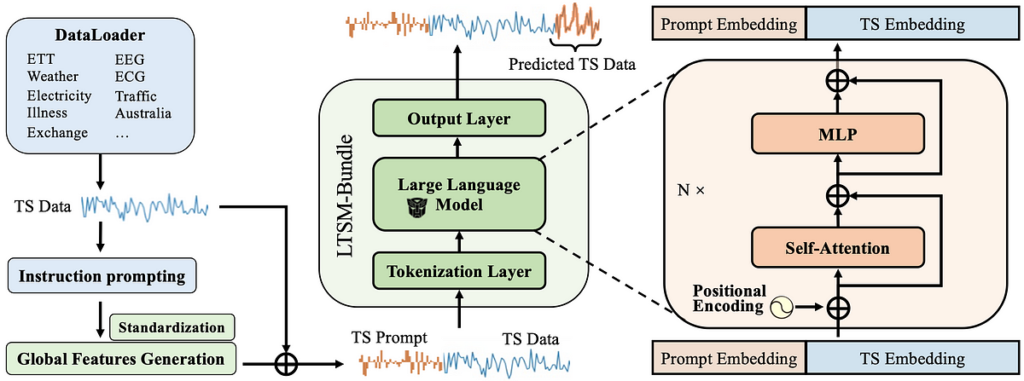

6. Bundling all these takeaways creates an LTSM model (LTSM-Bundle) that outperforms all existing methods that re-program LLMs for time series, as well as transformer-based time series forecasting models.

Re-program an LTSM yourself!

Want to try re-programming your own LTSM? Here is the tutorial for the LTSM-Bundle: https://github.com/daochenzha/ltsm/blob/main/tutorial/README.md

Step 1: Create a virtual environment. Clone the repository and install the requirements.

conda create -n ltsm python=3.8.0

conda activate ltsm

git clone git@github.com:daochenzha/ltsm.git

cd ltsm

pip3 install -e .

pip3 install -r requirements.txt

Step 2: Prepare your dataset. Make sure your local data folder looks like the following:

- ltsm/
    - datasets/
        DATA_1.csv/
        DATA_2.csv/
        DATA_3.csv/
        ...
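Before generating prompts, it can help to confirm that the CSV files are where you expect them. The snippet below is a minimal sketch, not part of the LTSM repository: `list_dataset_files` is a hypothetical helper, and it assumes the CSV files sit directly inside the `datasets/` folder shown above.

```python
from pathlib import Path


def list_dataset_files(datasets_dir):
    """Return the sorted names of the CSV files directly inside datasets_dir.

    Hypothetical helper for a quick sanity check; it is not part of the
    LTSM code base.
    """
    root = Path(datasets_dir)
    if not root.is_dir():
        raise FileNotFoundError(f"dataset folder not found: {root}")
    # Keep regular files with a .csv extension and ignore everything else.
    return sorted(
        p.name for p in root.iterdir()
        if p.is_file() and p.suffix.lower() == ".csv"
    )
```

Calling `list_dataset_files("datasets")` from the `ltsm/` folder should list `DATA_1.csv`, `DATA_2.csv`, and so on if the layout matches.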

Step 3: Generate the time series prompts from the training, validation, and test datasets.

python3 prompt_generate_split.py

Step 4: Find the generated time series prompts in the ‘./prompt_data_split’ folder. Then run the following commands to finalize the prompts:

# normalizing the prompts

python3 prompt_normalization_split.py --mode fit

# export the prompts to the "./prompt_data_normalize_split" folder

python3 prompt_normalization_split.py --mode transform
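The two modes follow the familiar fit-then-transform pattern: first estimate statistics from the training prompts, then apply them everywhere. Here is a minimal sketch of that pattern, assuming plain per-column standard scaling. The `fit` and `transform` functions are illustrative only, and the actual script may normalize differently.

```python
from statistics import fmean, pstdev


def fit(rows):
    """Compute the per-column mean and standard deviation of the training rows.

    rows is a list of equal-length lists of floats. Standard scaling is an
    assumption here, not necessarily what prompt_normalization_split.py does.
    """
    columns = list(zip(*rows))
    means = [fmean(c) for c in columns]
    # A constant column has zero deviation; fall back to 1.0 to avoid dividing by zero.
    stds = [pstdev(c) or 1.0 for c in columns]
    return means, stds


def transform(rows, stats):
    """Scale rows with statistics that were fitted on the training split."""
    means, stds = stats
    return [[(x - m) / s for x, m, s in zip(row, means, stds)] for row in rows]
```

The point of splitting the work in two is that the statistics come from the training split only, so the validation and test prompts are scaled with the same numbers and no information leaks from them.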

Final Step: Train your own LTSM with Time Series Prompt and Linear Tokenization on gpt2-medium.

python3 main_ltsm.py \
    --model LTSM \
    --model_name_or_path gpt2-medium \
    --train_epochs 500 \
    --batch_size 10 \
    --pred_len 96 \
    --data_path "DATA_1.csv DATA_2.csv" \
    --test_data_path_list "DATA_3.csv" \
    --prompt_data_path "prompt_bank/prompt_data_normalize_split" \
    --freeze 0 \
    --learning_rate 1e-3 \
    --downsample_rate 20 \
    --output_dir [Your_Output_Path]
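If you plan to run several experiments, you may prefer to keep the options in one place and build the command from them. The following is a rough sketch: `build_command` is a hypothetical helper, the option names and values are copied from the command above, and `[Your_Output_Path]` is still a placeholder you need to replace.

```python
import shlex


def build_command(script, options):
    """Turn a dict of option names and values into an argv list for the script."""
    argv = ["python3", script]
    for name, value in options.items():
        argv += [f"--{name}", str(value)]
    return argv


# Values are kept as strings so they reach the script exactly as written above.
options = {
    "model": "LTSM",
    "model_name_or_path": "gpt2-medium",
    "train_epochs": "500",
    "batch_size": "10",
    "pred_len": "96",
    "data_path": "DATA_1.csv DATA_2.csv",
    "test_data_path_list": "DATA_3.csv",
    "prompt_data_path": "prompt_bank/prompt_data_normalize_split",
    "freeze": "0",
    "learning_rate": "1e-3",
    "downsample_rate": "20",
    "output_dir": "[Your_Output_Path]",  # placeholder, replace with a real path
}

command = build_command("main_ltsm.py", options)
# Print a shell-safe version of the command for inspection.
print(" ".join(shlex.quote(part) for part in command))
```

Once the printed command looks right, you could launch it with `subprocess.run(command, check=True)` from the repository folder.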

Check out more details in our paper and GitHub repo:

Paper: https://arxiv.org/pdf/2406.14045

Code: https://github.com/daochenzha/ltsm/

References:

[1] Liu, Pengfei, et al. “Pre-train, prompt, and predict: A systematic survey of prompting methods in natural language processing.” ACM Computing Surveys 55.9 (2023): 1–35.

[2] Liu, Xiao, et al. “Self-supervised learning: Generative or contrastive.” IEEE Transactions on Knowledge and Data Engineering 35.1 (2021): 857–876.

[3] Ansari, Abdul Fatir, et al. “Chronos: Learning the language of time series.” arXiv preprint arXiv:2403.07815 (2024).