OpenAI recently announced support for Structured Outputs in its latest gpt-4o-2024-08-06 models. Structured outputs in relation to large language models (LLMs) are nothing new: developers have either used various prompt engineering techniques or third-party tools.

In this article we’ll explain what structured outputs are, how they work, and how you can apply them in your own LLM-based applications. Although OpenAI’s announcement makes it fairly easy to implement using their APIs (as we’ll demonstrate here), you may want to opt instead for the open source Outlines package (maintained by the lovely folks over at dottxt), since it can be applied to both self-hosted open-weight models (e.g. Mistral and LLaMA) and proprietary APIs. (Disclaimer: due to this issue, Outlines does not, as of this writing, support structured JSON generation via OpenAI APIs, but that will change soon!)

If the RedPajama dataset is any indication, the overwhelming majority of pre-training data is human text. Therefore “natural language” is the native domain of LLMs, both in the input and in the output. When we build applications, however, we want to use machine-readable formal structures or schemas to encapsulate our data input/output. This way we build robustness and determinism into our applications.

Structured Outputs is a mechanism by which we enforce a pre-defined schema on the LLM output. This typically means that we enforce a JSON schema, but it isn’t limited to JSON only: we could in principle enforce XML, Markdown, or a completely custom schema. The benefits of Structured Outputs are two-fold:

- Simpler prompt design: we need not be overly verbose when specifying how the output should look

- Deterministic names and types: we can guarantee to obtain, for example, an attribute age with a Number JSON type in the LLM response

For this example, we’ll use the first sentence from Sam Altman’s Wikipedia entry…

Samuel Harris Altman (born April 22, 1985) is an American entrepreneur and investor best known as the CEO of OpenAI since 2019 (he was briefly fired and reinstated in November 2023).

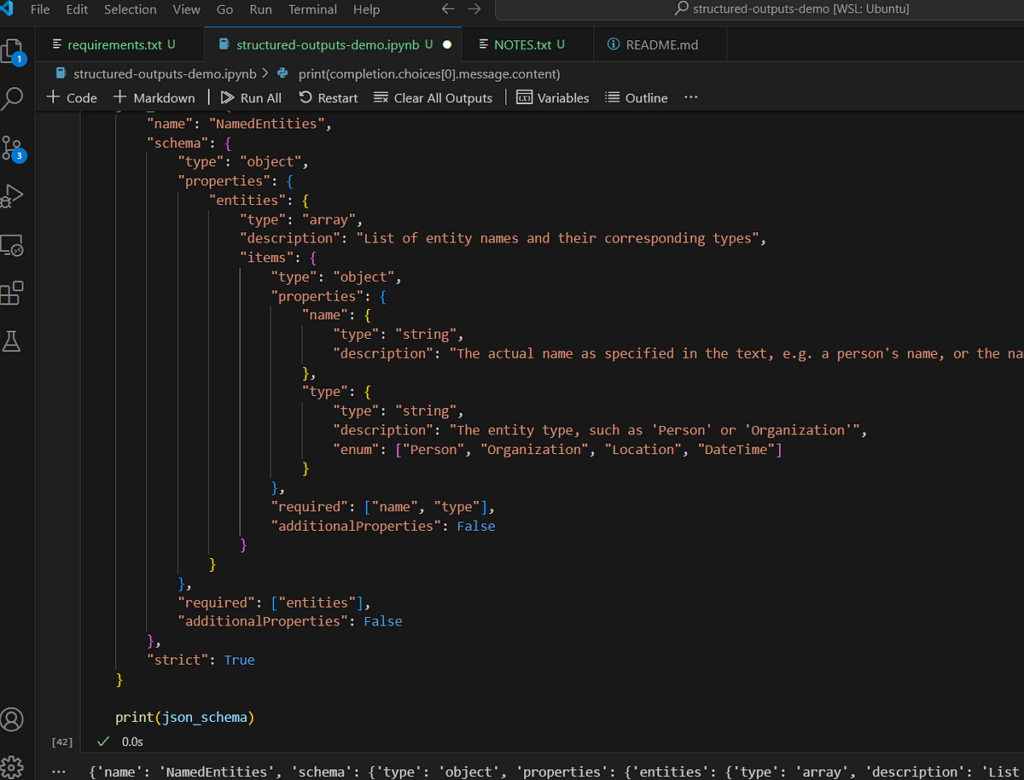

…and we’re going to use the latest GPT-4o checkpoint as a named-entity recognition (NER) system. We will enforce the following JSON schema:

    json_schema = {
        "name": "NamedEntities",
        "schema": {
            "type": "object",
            "properties": {
                "entities": {
                    "type": "array",
                    "description": "List of entity names and their corresponding types",
                    "items": {
                        "type": "object",
                        "properties": {
                            "name": {
                                "type": "string",
                                "description": "The actual name as specified in the text, e.g. a person's name, or the name of the country"
                            },
                            "type": {
                                "type": "string",
                                "description": "The entity type, such as 'Person' or 'Organization'",
                                "enum": ["Person", "Organization", "Location", "DateTime"]
                            }
                        },
                        "required": ["name", "type"],
                        "additionalProperties": False
                    }
                }
            },
            "required": ["entities"],
            "additionalProperties": False
        },
        "strict": True
    }

In essence, our LLM response should contain a NamedEntities object, which consists of an array of entities, each one containing a name and a type. There are a few things to note here. We can, for example, enforce an Enum type, which is very useful in NER since we can constrain the output to a fixed set of entity types. We must specify all the fields in the required array; however, we can also emulate “optional” fields by setting the type to e.g. ["string", "null"].
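As a minimal sketch of the “optional” field trick, the fragment below adds a hypothetical nickname property (it is not part of the schema used in this article) whose type is the union of "string" and "null". The field still appears in required, but the model is free to fill it with null. Note that in a Python dict the JSON null type is written as the string "null".

```python
import json

# Hypothetical entity schema with one "optional" field. The property name
# "nickname" is an assumption made purely for illustration.
entity_schema = {
    "type": "object",
    "properties": {
        "name": {"type": "string"},
        # Union type: either a string or an explicit null.
        "nickname": {"type": ["string", "null"]},
    },
    # Every property is still listed as required.
    "required": ["name", "nickname"],
    "additionalProperties": False,
}

# A response that leaves the optional field empty serializes it as null,
# which Python's json module maps to None.
example_response = json.loads('{"name": "Samuel Harris Altman", "nickname": null}')
print(example_response["nickname"] is None)
```

Downstream code can then treat None as “not provided” without having to check whether the key exists at all.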

We can now pass our schema, together with the data and the instructions, to the API. We need to populate the response_format argument with a dict where we set type to "json_schema" and then supply the corresponding schema.

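    from openai import OpenAI

    # The client reads the API key from the OPENAI_API_KEY environment variable.
    client = OpenAI()

    # Input text: the Wikipedia sentence quoted earlier.
    s = (
        "Samuel Harris Altman (born April 22, 1985) is an American entrepreneur "
        "and investor best known as the CEO of OpenAI since 2019 (he was briefly "
        "fired and reinstated in November 2023)."
    )

    completion = client.beta.chat.completions.parse(
        model="gpt-4o-2024-08-06",
        messages=[
            {
                "role": "system",
                "content": """You are a Named Entity Recognition (NER) assistant.
                    Your job is to identify and return all entity names and their
                    types for a given piece of text. You are to strictly conform
                    only to the following entity types: Person, Location, Organization
                    and DateTime. If uncertain about entity type, please ignore it.
                    Be careful of certain acronyms, such as role titles "CEO", "CTO",
                    "VP", etc - these are to be ignored.""",
            },
            {
                "role": "user",
                "content": s
            }
        ],
        # Enforce the schema defined above on the model output.
        response_format={
            "type": "json_schema",
            "json_schema": json_schema,
        }
    )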

The output should look something like this:

    {'entities': [{'name': 'Samuel Harris Altman', 'type': 'Person'},
                  {'name': 'April 22, 1985', 'type': 'DateTime'},
                  {'name': 'American', 'type': 'Location'},
                  {'name': 'OpenAI', 'type': 'Organization'},
                  {'name': '2019', 'type': 'DateTime'},
                  {'name': 'November 2023', 'type': 'DateTime'}]}
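Because the response is guaranteed to follow the schema, downstream code can consume it without defensive checks. The sketch below assumes the payload has already been extracted as a JSON string (with the OpenAI Python client it is typically found in completion.choices[0].message.content) and uses a hard-coded copy of the output above. The helper name group_entities is hypothetical.

```python
import json
from collections import defaultdict


def group_entities(content: str) -> dict[str, list[str]]:
    """Parse the JSON payload and group entity names by entity type."""
    payload = json.loads(content)
    grouped = defaultdict(list)
    for entity in payload["entities"]:
        # Both keys are guaranteed by the schema's "required" array.
        grouped[entity["type"]].append(entity["name"])
    return dict(grouped)


# Hard-coded stand-in for completion.choices[0].message.content.
content = json.dumps({
    "entities": [
        {"name": "Samuel Harris Altman", "type": "Person"},
        {"name": "April 22, 1985", "type": "DateTime"},
        {"name": "American", "type": "Location"},
        {"name": "OpenAI", "type": "Organization"},
        {"name": "2019", "type": "DateTime"},
        {"name": "November 2023", "type": "DateTime"},
    ]
})

grouped = group_entities(content)
print(grouped["DateTime"])
```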

The full source code used in this article is available here.

The magic is in the combination of constrained sampling and context-free grammar (CFG). We mentioned previously that the overwhelming majority of pre-training data is “natural language”. Statistically, this means that for every decoding/sampling step, there is a non-negligible probability of sampling some arbitrary token from the learned vocabulary (and in modern LLMs, vocabularies typically stretch across 40 000+ tokens). However, when dealing with formal schemas, we would like to rapidly eliminate all improbable tokens.

In the previous example, if we have already generated…

    {'entities': [{'name': 'Samuel Harris Altman',

…then ideally we would like to place a very high logit bias on the 'typ token in the next decoding step, and very low probability on all the other tokens in the vocabulary.
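The idea can be sketched with a toy vocabulary. The token strings, the logit values, and the helper name constrained_probs below are made up for illustration. A real decoder works over tens of thousands of tokens and derives the allowed set from the grammar. Here, disallowed tokens receive a logit of negative infinity, so the softmax assigns them zero probability.

```python
import math


def constrained_probs(logits: dict[str, float], allowed: set[str]) -> dict[str, float]:
    """Softmax over the vocabulary after masking every disallowed token."""
    if not any(tok in allowed for tok in logits):
        raise ValueError("no allowed token is present in the vocabulary")
    # Mask: disallowed tokens get -inf, which becomes exp(-inf) == 0.0 below.
    masked = {tok: (val if tok in allowed else -math.inf) for tok, val in logits.items()}
    peak = max(masked.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(val - peak) for tok, val in masked.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


# Toy logits: without constraints, " and" would be the most likely token.
logits = {" 'typ": 1.2, " and": 2.5, " who": 2.1, "}": 0.3}
allowed = {" 'typ"}  # the only continuation the schema permits at this step

probs = constrained_probs(logits, allowed)
print(probs)
```

Even though the unconstrained model prefers a natural-language continuation, the mask leaves the schema-conforming token as the only one that can be sampled.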

This is in essence what happens. When we supply the schema, it gets converted into a formal grammar, or CFG, which serves to guide the logit bias values during the decoding step. CFG is one of those old-school computer science and natural language processing (NLP) mechanisms that is making a comeback. A very nice introduction to CFG was actually presented in this StackOverflow answer, but essentially it is a way of describing transformation rules for a collection of symbols.
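To make “transformation rules for a collection of symbols” concrete, here is a toy grammar for a single entity object. This is an illustrative sketch only, not the grammar OpenAI derives from the schema: the rule names are invented, and the NAME rule is restricted to two fixed strings so that the language stays finite and can be enumerated.

```python
from itertools import product

# Each nonterminal maps to a list of alternatives; each alternative is a
# sequence of symbols. Symbols that are not keys in GRAMMAR are terminals.
GRAMMAR = {
    "ENTITY": [['{"name": ', "NAME", ', "type": ', "TYPE", "}"]],
    "NAME": [['"OpenAI"'], ['"Samuel Harris Altman"']],
    "TYPE": [['"Person"'], ['"Organization"'], ['"Location"'], ['"DateTime"']],
}


def expand(symbol: str) -> list[str]:
    """Return every terminal string derivable from the given symbol."""
    if symbol not in GRAMMAR:
        return [symbol]  # terminal: nothing left to rewrite
    results = []
    for alternative in GRAMMAR[symbol]:
        # Expand each symbol, then combine the pieces in every possible way.
        parts = [expand(sym) for sym in alternative]
        results.extend("".join(combo) for combo in product(*parts))
    return results


sentences = expand("ENTITY")
print(len(sentences))
print(sentences[0])
```

Every string this grammar can produce is a well-formed entity, which is why a decoder restricted to grammar-approved tokens cannot emit an entity type outside the enum.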

Structured Outputs are nothing new, but are certainly becoming top-of-mind with proprietary APIs and LLM services. They provide a bridge between the erratic and unpredictable “natural language” domain of LLMs, and the deterministic and structured domain of software engineering. Structured Outputs are essentially a must for anyone designing complex LLM applications where LLM outputs must be shared or “presented” in various components. While API-native support has finally arrived, developers should also consider using libraries such as Outlines, as they provide an LLM/API-agnostic way of dealing with structured output.