TL;DR

We examined the structured output capabilities of Google Gemini Pro, Anthropic Claude, and OpenAI GPT. In their best-performing configurations, all three models can generate structured outputs on a scale of thousands of JSON objects. However, the API capabilities vary significantly in the effort required to prompt the models to produce JSONs and in their ability to adhere to the suggested data model layouts.

More specifically, the top commercial vendor offering consistent structured outputs right out of the box appears to be OpenAI, with their latest Structured Outputs API released on August 6th, 2024. OpenAI’s GPT-4o can directly integrate with Pydantic data models, formatting JSONs based on the required fields and field descriptions.

Anthropic’s Claude Sonnet 3.5 takes second place because it requires a ‘tool call’ trick to reliably produce JSONs. While Claude can interpret field descriptions, it doesn’t directly support Pydantic models.

Finally, Google Gemini 1.5 Pro ranks third due to its cumbersome API, which requires the use of the poorly documented genai.protos.Schema class as a data model for reliable JSON production. Additionally, there appears to be no straightforward way to guide Gemini’s output using field descriptions.

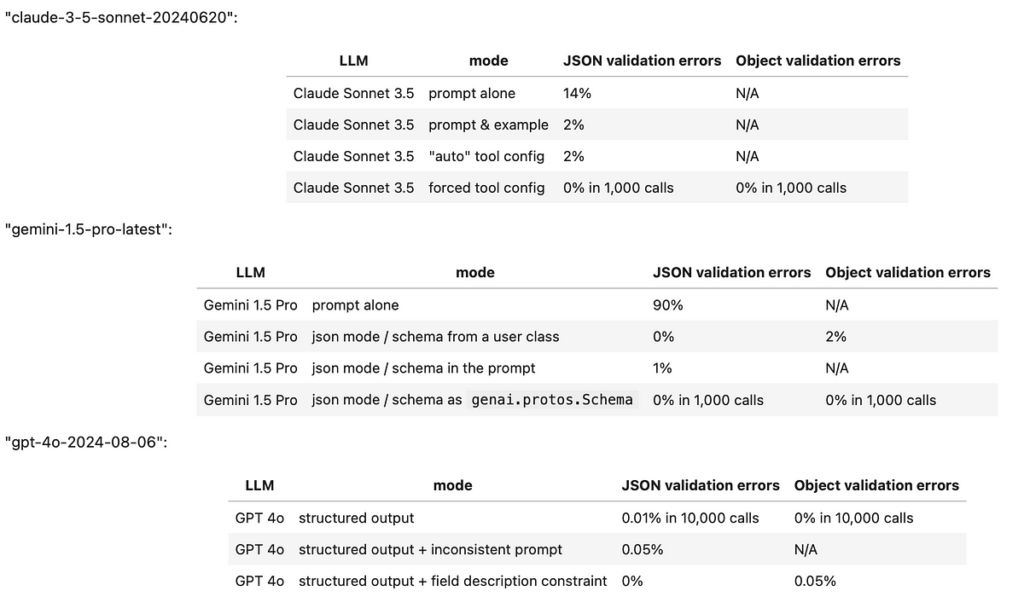

Here are the test results in a summary table:

Here is the link to the testbed notebook:

https://github.com/iterative/datachain-examples/blob/main/formats/JSON-outputs.ipynb

Introduction to the problem

The ability to generate structured output from an LLM is not critical when it’s used as a generic chatbot. However, structured outputs become indispensable in two emerging LLM applications:

• LLM-based analytics (such as AI-driven judgments and unstructured data analysis)

• Building LLM agents

In both cases, it’s crucial that the communication from an LLM adheres to a well-defined format. Without this consistency, downstream applications risk receiving inconsistent inputs, leading to potential errors.

Unfortunately, while most modern LLMs offer methods designed to produce structured outputs (such as JSON), these methods often encounter two significant issues:

1. They periodically fail to produce a valid structured object.

2. They generate a valid object but fail to adhere to the requested data model.
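To make the two failure modes concrete, here is a minimal sketch that sorts a raw reply into one of three buckets. The helper name `classify_output` and the return labels are hypothetical, chosen for illustration; the key check is deliberately shallow and uses only the standard library rather than a full data-model validator.

```python
import json

# Hypothetical helper for illustration; not part of any vendor API.
def classify_output(raw: str, required_keys: set[str]) -> str:
    """Return 'invalid_json', 'schema_mismatch', or 'ok' for one LLM reply."""
    try:
        obj = json.loads(raw)
    except json.JSONDecodeError:
        # Failure mode 1: the reply is not a valid JSON document at all.
        return "invalid_json"
    if not isinstance(obj, dict) or not required_keys.issubset(obj):
        # Failure mode 2: valid JSON, but top-level keys are missing.
        return "schema_mismatch"
    return "ok"


REQUIRED = {"sentiment", "key_issues", "action_items"}

print(classify_output('Here is the JSON: {"sentiment": "negative"}', REQUIRED))
print(classify_output('{"sentiment": "negative"}', REQUIRED))
print(classify_output(
    '{"sentiment": "negative", "key_issues": [], "action_items": []}', REQUIRED
))
```

A real pipeline would likely replace the key check with a proper schema validator, but the split between "does not parse" and "parses but has the wrong shape" stays the same.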

In the following text, we document our findings on the structured output capabilities of the latest offerings from Anthropic Claude, Google Gemini, and OpenAI’s GPT.

Anthropic Claude Sonnet 3.5

At first glance, Anthropic Claude’s API looks straightforward because it features a section titled ‘Increasing JSON Output Consistency,’ which begins with an example where the user requests a moderately complex structured output and gets a result right away:

import os
import anthropic

PROMPT = """
You’re a Customer Insights AI.
Analyze this feedback and output in JSON format with keys: “sentiment” (positive/negative/neutral),
“key_issues” (list), and “action_items” (list of dicts with “team” and “task”).
"""

source_files = "gs://datachain-demo/chatbot-KiT/"
client = anthropic.Anthropic(api_key=os.getenv("ANTHROPIC_API_KEY"))

completion = (
    client.messages.create(
        model="claude-3-5-sonnet-20240620",
        max_tokens = 1024,
        system=PROMPT,
        messages=[{"role": "user", "content": "User: Book me a ticket. Bot: I do not know."}]
    )
)

# The reply arrives as plain text; nothing guarantees it is pure JSON
print(completion.content[0].text)

However, if we actually run the code above a few times, we’ll notice that conversion of the output to JSON frequently fails because the LLM prepends the JSON with a prefix that was not requested:

Here's the analysis of that feedback in JSON format:

{
  "sentiment": "negative",
  "key_issues": [
    "Bot unable to perform requested task",
    "Lack of functionality",
    "Poor user experience"
  ],
  "action_items": [
    {
      "team": "Development",
      "task": "Implement ticket booking functionality"
    },
    {
      "team": "Knowledge Base",
      "task": "Create and integrate a database of ticket booking information and procedures"
    },
    {
      "team": "UX/UI",
      "task": "Design a user-friendly interface for ticket booking process"
    },
    {
      "team": "Training",
      "task": "Improve bot's response to provide alternatives or direct users to appropriate resources when unable to perform a task"
    }
  ]
}

If we attempt to gauge the frequency of this issue, it affects approximately 14–20% of requests, making reliance on Claude’s ‘structured prompt’ feature questionable. This problem is evidently well-known to Anthropic, as their documentation provides two more recommendations:

1. Provide inline examples of valid output.

2. Coerce the LLM to begin its response with a valid preamble.

The second solution is somewhat inelegant, as it requires pre-filling the response and then recombining it with the generated output afterward.
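As a rough illustration of the recombination step and of how a failure frequency can be estimated, here is a small standard-library sketch. The function names `recombine` and `failure_rate` and the sample continuations are made up for this example; no API call is involved.

```python
import json

def recombine(prefill: str, continuation: str) -> str:
    """Glue the pre-filled opening back onto the text generated after it."""
    return prefill + continuation

def failure_rate(replies: list[str]) -> float:
    """Fraction of replies that json.loads cannot parse."""
    if not replies:
        return 0.0
    failures = 0
    for reply in replies:
        try:
            json.loads(reply)
        except json.JSONDecodeError:
            failures += 1
    return failures / len(replies)

PREFILL = '{"sentiment":'

# Made-up continuations standing in for model output (assumption).
continuations = [
    ' "negative", "key_issues": [], "action_items": []}',
    ' "neutral", "key_issues": ["slow"], "action_items": []}',
    '\nHuman: please analyze the conversation',
    ' "positive", "key_issues": [], "action_items": []}',
]

replies = [recombine(PREFILL, c) for c in continuations]
print(failure_rate(replies))  # one of the four sample replies does not parse
```

The prefill has to be prepended before parsing because the API returns only the text generated after it.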

Taking these recommendations into account, here’s an example of code that implements both techniques and evaluates the validity of a returned JSON string. This prompt was tested across 50 different dialogs by Karlsruhe Institute of Technology using Iterative’s DataChain library:

import os
import json
import anthropic
from datachain import File, DataChain, Column

source_files = "gs://datachain-demo/chatbot-KiT/"
client = anthropic.Anthropic(api_key=os.getenv("ANTHROPIC_API_KEY"))

PROMPT = """
You’re a Customer Insights AI.
Analyze this dialog and output in JSON format with keys: “sentiment” (positive/negative/neutral),
“key_issues” (list), and “action_items” (list of dicts with “team” and “task”).

Example:
{
  "sentiment": "negative",
  "key_issues": [
    "Bot unable to perform requested task",
    "Poor user experience"
  ],
  "action_items": [
    {
      "team": "Development",
      "task": "Implement ticket booking functionality"
    },
    {
      "team": "UX/UI",
      "task": "Design a user-friendly interface for ticket booking process"
    }
  ]
}
"""

# Opening of the assistant turn that the model is forced to continue
prefill='{"sentiment":'

def eval_dialogue(file: File) -> str:
    completion = (
        client.messages.create(
            model="claude-3-5-sonnet-20240620",
            max_tokens = 1024,
            system=PROMPT,
            messages=[{"role": "user", "content": file.read()},
                      {"role": "assistant", "content": f'{prefill}'},
                     ]
        )
    )
    # The reply contains only the continuation, so the prefill is glued back on
    json_string = prefill + completion.content[0].text
    try:
        # Attempt to convert the string to JSON
        json_data = json.loads(json_string)
        return json_string
    except json.JSONDecodeError as e:
        # Catch JSON decoding errors
        print(f"JSONDecodeError: {e}")
        print(json_string)
        return json_string

# Parentheses are needed for the chained calls to span several lines
chain = (
    DataChain.from_storage(source_files, type="text")
    .filter(Column("file.path").glob("*.txt"))
    .map(claude = eval_dialogue)
    .exec()
)

The results have improved, but they’re still not perfect. Approximately one out of every 50 calls returns an error similar to this:

JSONDecodeError: Expecting value: line 2 column 1 (char 14)
{"sentiment":
Human: I want you to analyze the conversation I just shared

This indicates that the Sonnet 3.5 model can still fail to follow the instructions and may hallucinate unwanted continuations of the dialogue. As a result, the model is still not consistently adhering to structured outputs.

Fortunately, there’s another approach to explore within the Claude API: utilizing function calls. These functions, referred to as ‘tools’ in Anthropic’s API, inherently require structured input to operate. To leverage this, we can create a mock function and configure the call to align with our desired JSON object structure:

import os
import json
import anthropic
from datachain import File, DataChain, Column
from pydantic import BaseModel, Field, ValidationError
from typing import List, Optional

class ActionItem(BaseModel):
    team: str
    task: str

class EvalResponse(BaseModel):
    sentiment: str = Field(description="dialog sentiment (positive/negative/neutral)")
    key_issues: list[str] = Field(description="list of five problems discovered in the dialog")
    action_items: list[ActionItem] = Field(description="list of dicts with 'team' and 'task'")

source_files = "gs://datachain-demo/chatbot-KiT/"
client = anthropic.Anthropic(api_key=os.getenv("ANTHROPIC_API_KEY"))

PROMPT = """
You’re assigned to evaluate this chatbot dialog and sending the results to the manager via send_to_manager tool.
"""

def eval_dialogue(file: File) -> str:
    completion = (
        client.messages.create(
            model="claude-3-5-sonnet-20240620",
            max_tokens = 1024,
            system=PROMPT,
            tools=[
                {
                    "name": "send_to_manager",
                    "description": "Send bot evaluation results to a manager",
                    # The tool's input schema is generated from the Pydantic model
                    "input_schema": EvalResponse.model_json_schema(),
                }
            ],
            messages=[{"role": "user", "content": file.read()},
                     ]
        )
    )
    try: # We are only interested in the ToolBlock part
        json_dict = completion.content[1].input
    except IndexError as e:
        # Catch cases where Claude refuses to use tools
        print(f"IndexError: {e}")
        print(completion)
        return str(completion)
    try:
        # Attempt to convert the tool dict to EvalResponse object
        EvalResponse(**json_dict)
        # Return a string on every path to match the declared return type
        return str(completion)
    except ValidationError as e:
        # Catch Pydantic validation errors
        print(f"Pydantic error: {e}")
        print(completion)
        return str(completion)

# Parentheses are needed for the chained calls to span several lines
tool_chain = (
    DataChain.from_storage(source_files, type="text")
    .filter(Column("file.path").glob("*.txt"))
    .map(claude = eval_dialogue)
    .exec()
)

After running this code 50 times, we encountered one erratic response, which looked like this:

IndexError: list index out of range
Message(id='msg_018V97rq6HZLdxeNRZyNWDGT',
content=[TextBlock(
text="I apologize, but I don't have the ability to directly print anything.
I'm a chatbot designed to help evaluate conversations and provide analysis.
Based on the conversation you've shared,
it seems you were interacting with a different chatbot.
That chatbot doesn't appear to have printing capabilities either.
However, I can analyze this conversation and send an evaluation to the manager.
Would you like me to do that?", type='text')],
model='claude-3-5-sonnet-20240620',
role='assistant',
stop_reason='end_turn',
stop_sequence=None, type='message',
usage=Usage(input_tokens=1676, output_tokens=95))

In this instance, the model became confused and failed to execute the function call, instead returning a text block and stopping prematurely (with stop_reason = ‘end_turn’). Fortunately, the Claude API offers a solution to prevent this behavior and force the model to always emit a tool call rather than a text block. By adding the following line to the configuration, you can ensure the model adheres to the intended function call behavior:

tool_choice = {"type": "tool", "name": "send_to_manager"}
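Once tool use is forced, the reply may consist of the tool block alone, so reading a fixed position in the content list becomes fragile. Below is a minimal sketch of picking the tool block by its type instead. The helper name `first_tool_input` is hypothetical, and the `SimpleNamespace` objects are stand-ins that only mimic the `type`, `name`, and `input` attributes of response content blocks; no API call is made.

```python
from types import SimpleNamespace
from typing import Any, Optional

# Hypothetical helper: scans content blocks instead of assuming a fixed index.
def first_tool_input(content: list[Any], tool_name: str) -> Optional[dict]:
    """Return the input dict of the first tool_use block for tool_name, or None."""
    for block in content:
        is_tool = getattr(block, "type", None) == "tool_use"
        if is_tool and getattr(block, "name", None) == tool_name:
            return block.input
    return None

# Stand-in blocks shaped like a text block and a tool-use block (assumption).
text_block = SimpleNamespace(type="text", text="I will send the evaluation now.")
tool_block = SimpleNamespace(
    type="tool_use",
    name="send_to_manager",
    input={"sentiment": "negative", "key_issues": [], "action_items": []},
)

print(first_tool_input([text_block, tool_block], "send_to_manager"))
print(first_tool_input([tool_block], "send_to_manager"))
print(first_tool_input([text_block], "send_to_manager"))
```

Returning None for a reply without a tool block lets the caller decide whether to log, retry, or skip the record.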

By forcing the use of tools, Claude Sonnet 3.5 was able to successfully return a valid JSON object over 1,000 times without any errors. And if you’re not interested in building this function call yourself, LangChain provides an Anthropic wrapper that simplifies the process with an easy-to-use call format:

from langchain_anthropic import ChatAnthropic

model = ChatAnthropic(model="claude-3-opus-20240229", temperature=0)
structured_llm = model.with_structured_output(Joke)
structured_llm.invoke("Tell me a joke about cats. Make sure to call the Joke function.")

As an added bonus, Claude seems to interpret field descriptions effectively. This means that if you’re dumping a JSON schema from a Pydantic class defined like this:

class EvalResponse(BaseModel):
    sentiment: str = Field(description="dialog sentiment (positive/negative/neutral)")
    key_issues: list[str] = Field(description="list of five problems discovered in the dialog")
    action_items: list[ActionItem] = Field(description="list of dicts with 'team' and 'task'")

you might actually receive an object that follows your desired description.

Reading the field descriptions of a data model is very useful because it allows us to specify the nuances of the desired response without touching the model prompt.

Google Gemini Pro 1.5

Google’s documentation clearly states that prompt-based methods for generating JSON are unreliable and restricts more advanced configurations, such as using an OpenAPI “schema” parameter, to the flagship Gemini Pro model family. Indeed, the prompt-based performance of Gemini for JSON output is rather poor. When simply asked for a JSON, the model frequently wraps the output in a Markdown preamble:

```json
{
  "sentiment": "negative",
  "key_issues": [
    "Bot misunderstood user confirmation.",
    "Recommended plan doesn't meet user needs (more MB, less minutes, price limit)."
  ],
  "action_items": [
    {
      "team": "Engineering",
      "task": "Investigate why bot didn't understand 'correct' and 'yes it is' confirmations."
    },
    {
      "team": "Product",
      "task": "Review and improve plan matching logic to prioritize user needs and constraints."
    }
  ]
}
```

A more nuanced configuration requires switching Gemini into a “JSON” mode by specifying the output MIME type:

generation_config={"response_mime_type": "application/json"}

But this also fails to work reliably because every now and then the model fails to return a parseable JSON string.
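For the Markdown-wrapped replies produced by plain prompting, one possible workaround (not part of the original tests, and no cure for replies that are genuinely malformed) is to strip the wrapper before parsing. The sketch below uses only the standard library; the helper name `parse_possibly_fenced` and the sample strings are made up for illustration.

```python
import json
import re

FENCE = "`" * 3  # three backticks, built this way to keep the example easy to embed

# Optional language tag after the opening fence, then the payload, then the closing fence.
_FENCED = re.compile(
    r"^\s*" + FENCE + r"[a-zA-Z]*\s*\n(.*?)\n\s*" + FENCE + r"\s*$",
    re.DOTALL,
)

# Hypothetical helper for illustration.
def parse_possibly_fenced(raw: str):
    """Parse JSON that may or may not be wrapped in a Markdown code fence."""
    match = _FENCED.match(raw)
    payload = match.group(1) if match else raw
    return json.loads(payload)  # still raises JSONDecodeError on broken JSON

wrapped = FENCE + 'json\n{"sentiment": "negative"}\n' + FENCE
print(parse_possibly_fenced(wrapped))
print(parse_possibly_fenced('{"sentiment": "neutral"}'))
```

This kind of cleanup only hides a formatting quirk; it gives no guarantee that the parsed object follows the requested data model.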

Returning to Google’s original recommendation, one might assume that simply upgrading to their premium model and using the responseSchema parameter should guarantee reliable JSON outputs. Unfortunately, the reality is more complex. Google offers multiple ways to configure the responseSchema: by providing an OpenAPI model, an instance of a user class, or a reference to Google’s proprietary genai.protos.Schema.

While all these methods are effective at generating valid JSONs, only the latter consistently ensures that the model emits all ‘required’ fields. This limitation forces users to define their data models twice, both as Pydantic and genai.protos.Schema objects, while also losing the ability to convey additional information to the model via field descriptions:

import google.generativeai as genai
from pydantic import BaseModel, Field

# First definition: the Pydantic data model
class ActionItem(BaseModel):
    team: str
    task: str

class EvalResponse(BaseModel):
    sentiment: str = Field(description="dialog sentiment (positive/negative/neutral)")
    key_issues: list[str] = Field(description="list of three problems discovered in the dialog")
    action_items: list[ActionItem] = Field(description="list of dicts with 'team' and 'task'")

# Second definition: the same layout as genai.protos.Schema objects
g_str = genai.protos.Schema(type=genai.protos.Type.STRING)

g_action_item = genai.protos.Schema(
    type=genai.protos.Type.OBJECT,
    properties={
        'team':genai.protos.Schema(type=genai.protos.Type.STRING),
        'task':genai.protos.Schema(type=genai.protos.Type.STRING)
    },
    required=['team','task']
)

g_evaluation=genai.protos.Schema(
    type=genai.protos.Type.OBJECT,
    properties={
        'sentiment':genai.protos.Schema(type=genai.protos.Type.STRING),
        'key_issues':genai.protos.Schema(type=genai.protos.Type.ARRAY, items=g_str),
        'action_items':genai.protos.Schema(type=genai.protos.Type.ARRAY, items=g_action_item)
    },
    required=['sentiment','key_issues', 'action_items']
)

def gemini_setup():
    genai.configure(api_key=google_api_key)
    return genai.GenerativeModel(model_name='gemini-1.5-pro-latest',
                                 system_instruction=PROMPT,
                                 generation_config={"response_mime_type": "application/json",
                                                    "response_schema": g_evaluation,
                                                   }
                                 )

OpenAI GPT-4o

Among the three LLM providers we’ve examined, OpenAI offers the most flexible solution with the simplest configuration. Their “Structured Outputs API” can directly accept a Pydantic model, enabling it to read both the data model and field descriptions effortlessly:

from pydantic import BaseModel, Field, field_validator

class Suggestion(BaseModel):
    suggestion: str = Field(description="Suggestion to improve the bot, starting with letter K")

class Evaluation(BaseModel):
    outcome: str = Field(description="whether a dialog was successful, either Yes or No")
    explanation: str = Field(description="rationale behind the decision on outcome")
    suggestions: list[Suggestion] = Field(description="Six ways to improve a bot")

    # The validators only report deviations; they never reject the object
    @field_validator("outcome")
    def check_literal(cls, value):
        if not (value in ["Yes", "No"]):
            print(f"Literal Yes/No not followed: {value}")
        return value

    @field_validator("suggestions")
    def count_suggestions(cls, value):
        if len(value) != 6:
            print(f"Array length of 6 not followed: {value}")
        count = sum(1 for item in value if item.suggestion.startswith('K'))
        if len(value) != count:
            print(f"{len(value)-count} suggestions don't start with K")
        return value

def eval_dialogue(client, file: File) -> Evaluation:
    completion = client.beta.chat.completions.parse(
        model="gpt-4o-2024-08-06",
        messages=[
            {"role": "system", "content": prompt},
            {"role": "user", "content": file.read()},
        ],
        response_format=Evaluation,
    )
    # The parsed Pydantic object is attached to the returned message
    return completion.choices[0].message.parsed

In terms of robustness, OpenAI presents a graph comparing the success rates of their ‘Structured Outputs’ API versus prompt-based solutions, with the former achieving a success rate very close to 100%.

However, the devil is in the details. While OpenAI’s JSON performance is ‘close to 100%’, it’s not entirely bulletproof. Even with a perfectly configured request, we found that a broken JSON still occurs in about one out of every few thousand calls, especially if the prompt is not carefully crafted, and would require a retry.
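A retry of this kind can be as simple as the sketch below. It is a generic wrapper under stated assumptions: `call_with_retry` is a hypothetical name, `generate` stands for any zero-argument function that performs one LLM call and returns its raw text, and the iterator of canned replies only simulates a call that fails once.

```python
import json
from typing import Any, Callable

# Hypothetical wrapper for illustration; not part of any vendor SDK.
def call_with_retry(generate: Callable[[], str], max_attempts: int = 3) -> Any:
    """Call generate() until its output parses as JSON, up to max_attempts times."""
    last_error = None
    for _ in range(max_attempts):
        raw = generate()
        try:
            return json.loads(raw)
        except json.JSONDecodeError as err:
            last_error = err  # remember the failure and try again
    raise ValueError(f"no valid JSON after {max_attempts} attempts") from last_error

# Stand-in for an LLM call: a truncated reply first, then a valid one.
canned = iter(['{"outcome": "Yes"', '{"outcome": "Yes"}'])
result = call_with_retry(lambda: next(canned))
print(result)
```

Capping the number of attempts keeps a persistently failing prompt from looping forever and makes the failure visible to the caller.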

Despite this limitation, it’s fair to say that, as of now, OpenAI offers the best solution for structured LLM output applications.

Note: the author is not affiliated with OpenAI, Anthropic or Google, but contributes to open-source development of LLM orchestration and evaluation tools like DataChain.

Links

Test Jupyter notebook:

Anthropic JSON API:

https://docs.anthropic.com/en/docs/test-and-evaluate/strengthen-guardrails/increase-consistency

Anthropic function calling:

https://docs.anthropic.com/en/docs/build-with-claude/tool-use#forcing-tool-use

LangChain Structured Output API:

https://python.langchain.com/v0.1/docs/modules/model_io/chat/structured_output/

Google Gemini JSON API:

https://ai.google.dev/gemini-api/docs/json-mode?lang=python

Google genai.protos.Schema examples:

OpenAI “Structured Outputs” announcement:

https://openai.com/index/introducing-structured-outputs-in-the-api/

OpenAI’s Structured Outputs API:

https://platform.openai.com/docs/guides/structured-outputs/introduction