Classifier-free guidance (CFG) is a very useful technique in the media-generation domain (images, videos, music). Most scientific papers about media generation models and approaches mention CFG. I consider this paper the fundamental research on classifier-free guidance; it started in the image generation domain. The following is mentioned in the paper:

…we combine the resulting conditional and unconditional score estimates to attain a trade-off between sample quality and diversity similar to that obtained using classifier guidance.

So classifier-free guidance is based on conditional and unconditional score estimates and follows the earlier approach of classifier guidance. Simply speaking, classifier guidance updates the predicted scores in the direction of some predefined class by applying gradient-based updates.

An abstract example of classifier guidance: let’s say we have a predicted image Y and a classifier that predicts whether the image has a positive or negative meaning. We want to generate positive images, so we want prediction Y to be aligned with the positive class of the classifier. To do that, we can calculate how we should change Y so that it is classified as positive by our classifier: calculate the gradient and update Y accordingly.
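To make the abstract example more concrete, here is a minimal sketch of a single gradient-based guidance step. Everything in it is an assumption made for illustration: the "classifier" is a tiny logistic regression with made-up weights, and Y is a two-number feature vector rather than a real image.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def positive_probability(y, weights, bias):
    """Toy 'classifier': logistic regression over the features of y."""
    return sigmoid(sum(w * v for w, v in zip(weights, y)) + bias)

def guidance_step(y, weights, bias, step_size):
    """Move y along the gradient of log p(positive | y)."""
    p = positive_probability(y, weights, bias)
    # For a logistic classifier: d/dy log(sigmoid(w.y + b)) = (1 - p) * w
    grad = [(1.0 - p) * w for w in weights]
    return [v + step_size * g for v, g in zip(y, grad)]

weights, bias = [1.5, -2.0], 0.0  # hypothetical classifier parameters
y = [0.2, 0.4]                    # hypothetical predicted "image" features
y_guided = guidance_step(y, weights, bias, step_size=0.5)

print(positive_probability(y, weights, bias))         # before the update
print(positive_probability(y_guided, weights, bias))  # after the update (higher)
```

The point of the sketch is only that the update needs a classifier and its gradient, which is exactly the part classifier-free guidance avoids.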

Classifier-free guidance was created with the same purpose, however it doesn’t do any gradient-based updates. In my opinion, classifier-free guidance is much simpler to understand from its implementation formula for diffusion-based image generation:

The formula can be rewritten in the following way:

Several things are clear from the rewritten formula:

- When CFG_coefficient equals 1, the updated prediction equals the conditional prediction (so in fact no CFG is applied);

- When CFG_coefficient > 1, the scores that are higher in the conditional prediction than in the unconditional prediction become even higher in the updated prediction, while those that are lower become even lower.
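For reference, here is one way to write the two forms discussed above. This is a reconstruction under the assumption of the usual CFG update (it agrees with the `scores_processed` line of the Transformers code quoted later); the symbol names are my own shorthand: $w$ stands for CFG_coefficient, $s_{\text{cond}}$ and $s_{\text{uncond}}$ for the conditional and unconditional predictions, and $\hat{s}$ for the updated prediction.

```latex
\begin{align}
\hat{s} &= s_{\text{uncond}} + w \,\bigl(s_{\text{cond}} - s_{\text{uncond}}\bigr) \\
        &= s_{\text{cond}} + (w - 1)\,\bigl(s_{\text{cond}} - s_{\text{uncond}}\bigr)
\end{align}
```

In the second line, $w = 1$ removes the correction term entirely, and $w > 1$ pushes each score further in the direction in which the conditional prediction already differs from the unconditional one.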

The formula has no gradients; it works with the predicted scores themselves. The unconditional prediction is the prediction of a conditional generation model where the condition is empty (a null condition). At the same time, this unconditional prediction can be replaced by a negative-conditional prediction: we replace the null condition with some negative condition and expect a “negation” of this condition when the CFG formula is applied to update the final scores.

Classifier-free guidance for LLM text generation was described in this paper. Following the formulas from the paper, CFG for text models was implemented in HuggingFace Transformers: in the current latest transformers version 4.47.1, the documentation of the “UnbatchedClassifierFreeGuidanceLogitsProcessor” class mentions the following:

The processors computes a weighted average across scores from prompt conditional and prompt unconditional (or negative) logits, parameterized by the `guidance_scale`.

The unconditional scores are computed internally by prompting `model` with the `unconditional_ids` branch. See [the paper](https://arxiv.org/abs/2306.17806) for more information.

The formula to sample the next token according to the paper is:

It can be noticed that this formula is different from the one we had before: it has a logarithm component. The authors also mention that the “formulation can be extended to accommodate ‘negative prompting’”. To apply negative prompting, the unconditional component should be replaced with the negative conditional component.
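As a sketch of what that formula looks like, and assuming the notation of the text-CFG paper as I understand it ($w_i$ is the next token, $w_{<i}$ the tokens generated so far, $c$ the prompt, $\bar{c}$ a negative prompt, and $\gamma$ the guidance scale), the guided log-probability can be written as below. It is consistent term by term with the Transformers code, where `scores` plays the role of $\log P(w_i \mid w_{<i}, c)$ and `unconditional_logits` the role of $\log P(w_i \mid w_{<i})$.

```latex
\begin{align}
\log \hat{P}(w_i \mid w_{<i}, c)
  &= \log P(w_i \mid w_{<i})
   + \gamma \,\bigl(\log P(w_i \mid w_{<i}, c) - \log P(w_i \mid w_{<i})\bigr) \\
\log \hat{P}(w_i \mid w_{<i}, c, \bar{c})
  &= \log P(w_i \mid w_{<i}, \bar{c})
   + \gamma \,\bigl(\log P(w_i \mid w_{<i}, c) - \log P(w_i \mid w_{<i}, \bar{c})\bigr)
\end{align}
```

The second line is the negative-prompting variant: the unconditional term is simply swapped for the term conditioned on $\bar{c}$.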

Code implementation in HuggingFace Transformers is:

def __call__(self, input_ids, scores):
    # log-probabilities of the conditional (positive) branch
    scores = torch.nn.functional.log_softmax(scores, dim=-1)
    if self.guidance_scale == 1:
        return scores

    logits = self.get_unconditional_logits(input_ids)

    # log-probabilities of the unconditional (or negative) branch
    unconditional_logits = torch.nn.functional.log_softmax(logits[:, -1], dim=-1)
    scores_processed = self.guidance_scale * (scores - unconditional_logits) + unconditional_logits
    return scores_processed

“scores” is just the output of the LM head for the conditional prompt, and “input_ids” holds the tokens generated so far, which the processor uses to extend the negative (or unconditional) input ids before computing the unconditional logits. From the code we can see that it follows the formula with the logarithm component, applying “log_softmax”, which is equivalent to the logarithm of the probabilities.

A classic text generation model (LLM) has a slightly different nature compared to an image generation one: in a classic diffusion (image generation) model we predict a continuous feature map, while in text generation we do class prediction (categorical feature prediction) for each new token. What do we expect from CFG in general? We want to adjust the scores, but we don’t want to change the probability distribution a lot, e.g. we don’t want some very low-probability tokens from the conditional generation to become the most probable. But that is actually what can happen with the described formula for CFG.

1. Weird model behaviour with CFG noticed

My solution related to LLM Safety, which was awarded the second prize in the NeurIPS 2024 competitions track, was based on using CFG to prevent LLMs from generating personal data: I tuned an LLM to follow the system prompts that were used in a CFG manner during inference: “You should share personal data in the answers” and “Do not provide any personal data”. The two system prompts are opposites, and I used the tokenized first one as the negative input ids during text generation.

For more details, check my arXiv paper.

I noticed that when I use a CFG coefficient higher than or equal to 3, I can see severe degradation of the generated samples’ quality. This degradation was noticeable only during manual checks; no automatic scoring showed it. The automatic tests were based on the number of personal data phrases generated in the answers and the accuracy on the MMLU-Pro dataset evaluated with an LLM judge: the LLM was following the requirement to avoid personal data and the MMLU answers were in general correct, but a lot of artefacts appeared in the text. For example, the following answer was generated by the model for an input like “Hello, what is your name?”:

“Hello! you don’t have personal name. you’re an interface to provide language understanding”

The artefacts are: lowercase letters, user-assistant confusion.

2. Reproduce with GPT2 and check details

The behaviour mentioned above was noticed during the inference of the custom finetuned Llama3.1-8B-Instruct model, so before analyzing the reasons let’s check whether something similar can be seen during the inference of the GPT2 model, which is not even an instruction-following model.

Step 1. Download the GPT2 model (transformers==4.47.1)

from transformers import AutoModelForCausalLM, AutoTokenizer

model = AutoModelForCausalLM.from_pretrained("openai-community/gpt2")
tokenizer = AutoTokenizer.from_pretrained("openai-community/gpt2")

Step 2. Prepare the inputs

import torch

# For simplicity let's use CPU, GPT2 is small enough for that
device = torch.device('cpu')

# Let's set the positive and negative inputs,
# the model is not instruction-following, but just text completion
# (single quotes outside so the inner double quotes do not end the string)
positive_text = 'Extremely polite and friendly answers to the question "How are you doing?" are: 1.'
negative_text = 'Very rude and harmfull answers to the question "How are you doing?" are: 1.'
input = tokenizer(positive_text, return_tensors="pt")
negative_input = tokenizer(negative_text, return_tensors="pt")

Step 3. Test different CFG coefficients during the inference

Let’s try CFG coefficients 1.5, 3.0 and 5.0; all are low compared to those that we can use in the image generation domain.

guidance_scale = 1.5

# Plain greedy generation for the positive prompt
out_positive = model.generate(**input.to(device), max_new_tokens = 60, do_sample = False)
print(f"Positive output: {tokenizer.decode(out_positive[0])}")

# Plain greedy generation for the negative prompt
out_negative = model.generate(**negative_input.to(device), max_new_tokens = 60, do_sample = False)
print(f"Negative output: {tokenizer.decode(out_negative[0])}")

# CFG generation: positive prompt guided away from the negative prompt
input['negative_prompt_ids'] = negative_input['input_ids']
input['negative_prompt_attention_mask'] = negative_input['attention_mask']
out = model.generate(**input.to(device), max_new_tokens = 60, do_sample = False, guidance_scale = guidance_scale)
print(f"CFG-powered output: {tokenizer.decode(out[0])}")

The output:

Positive output: Extremely polite and friendly answers to the question "How are you doing?" are: 1. You are doing well, 2. You are doing well, 3. You are doing well, 4. You are doing well, 5. You are doing well, 6. You are doing well, 7. You are doing well, 8. You are doing well, 9. You are doing well

Negative output: Very rude and harmfull answers to the question "How are you doing?" are: 1. You are not doing anything wrong. 2. You are doing what you are supposed to do. 3. You are doing what you are supposed to do. 4. You are doing what you are supposed to do. 5. You are doing what you are supposed to do. 6. You are doing

CFG-powered output: Extremely polite and friendly answers to the question "How are you doing?" are: 1. You are doing well. 2. You are doing well in school. 3. You are doing well in school. 4. You are doing well in school. 5. You are doing well in school. 6. You are doing well in school. 7. You are doing well in school. 8

The output looks okay-ish; don’t forget that it’s just the GPT2 model, so don’t expect a lot. Let’s try a CFG coefficient of 3 this time:

guidance_scale = 3.0

out_positive = model.generate(**input.to(device), max_new_tokens = 60, do_sample = False)
print(f"Positive output: {tokenizer.decode(out_positive[0])}")

out_negative = model.generate(**negative_input.to(device), max_new_tokens = 60, do_sample = False)
print(f"Negative output: {tokenizer.decode(out_negative[0])}")

input['negative_prompt_ids'] = negative_input['input_ids']
input['negative_prompt_attention_mask'] = negative_input['attention_mask']
out = model.generate(**input.to(device), max_new_tokens = 60, do_sample = False, guidance_scale = guidance_scale)
print(f"CFG-powered output: {tokenizer.decode(out[0])}")

And the outputs this time are:

Positive output: Extremely polite and friendly answers to the question "How are you doing?" are: 1. You are doing well, 2. You are doing well, 3. You are doing well, 4. You are doing well, 5. You are doing well, 6. You are doing well, 7. You are doing well, 8. You are doing well, 9. You are doing well

Negative output: Very rude and harmfull answers to the question "How are you doing?" are: 1. You are not doing anything wrong. 2. You are doing what you are supposed to do. 3. You are doing what you are supposed to do. 4. You are doing what you are supposed to do. 5. You are doing what you are supposed to do. 6. You are doing

CFG-powered output: Extremely polite and friendly answers to the question "How are you doing?" are: 1. Have you ever been to a movie theater? 2. Have you ever been to a concert? 3. Have you ever been to a concert? 4. Have you ever been to a concert? 5. Have you ever been to a concert? 6. Have you ever been to a concert? 7

The positive and negative outputs look the same as before, but something happened to the CFG-powered output: it is “Have you ever been to a movie theater?” now.

If we use a CFG coefficient of 5.0, the CFG-powered output will be just:

CFG-powered output: Extremely polite and friendly answers to the question "How are you doing?" are: 1. smile, 2. smile, 3. smile, 4. smile, 5. smile, 6. smile, 7. smile, 8. smile, 9. smile, 10. smile, 11. smile, 12. smile, 13. smile, 14. smile exting.

Step 4. Analyze the case with artefacts

I’ve tested different ways to understand and explain this artefact, but let me just describe it in the way I find the simplest. We know that the CFG-powered completion with a CFG coefficient of 5.0 starts with the token “_smile” (“_” represents the space). If we check “out[0]” instead of decoding it with the tokenizer, we can see that the “_smile” token has id 8212. Now let’s just run the model’s forward function and check whether this token was probable without CFG applied:

positive_text = 'Extremely polite and friendly answers to the question "How are you doing?" are: 1.'
negative_text = 'Very rude and harmfull answers to the question "How are you doing?" are: 1.'
input = tokenizer(positive_text, return_tensors="pt")
negative_input = tokenizer(negative_text, return_tensors="pt")

with torch.no_grad():
    out_positive = model(**input.to(device))
    out_negative = model(**negative_input.to(device))

# take the last token for each of the inputs
first_generated_probabilities_positive = torch.nn.functional.softmax(out_positive.logits[0,-1,:], dim=-1)
first_generated_probabilities_negative = torch.nn.functional.softmax(out_negative.logits[0,-1,:], dim=-1)

# sort positive (ascending, so the most probable token has the largest index)
sorted_first_generated_probabilities_positive = torch.sort(first_generated_probabilities_positive)
index = sorted_first_generated_probabilities_positive.indices.tolist().index(8212)
print(sorted_first_generated_probabilities_positive.values[index], index)

# sort negative
sorted_first_generated_probabilities_negative = torch.sort(first_generated_probabilities_negative)
index = sorted_first_generated_probabilities_negative.indices.tolist().index(8212)
print(sorted_first_generated_probabilities_negative.values[index], index)

# check the tokenizer length
print(len(tokenizer))

The outputs can be:

tensor(0.0004) 49937 # probability and index for "_smile" token for positive condition

tensor(2.4907e-05) 47573 # probability and index for "_smile" token for negative condition

50257 # total number of tokens in the tokenizer

An important thing to mention: I’m doing greedy decoding, so I’m generating the most probable tokens. So what does the printed data mean in this case? It means that after applying CFG with a coefficient of 5.0, the token that became the most probable had a probability of about 0.04% or lower in both the positive and the negative conditioned generations (it was not even in the top-300 tokens).
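The “not even in the top-300” remark follows from simple arithmetic on the printed indices: `torch.sort` sorts in ascending order by default, so the most probable token sits at the last index. A small sketch (the helper name `rank_from_top` is mine, introduced only for illustration):

```python
def rank_from_top(ascending_index, vocab_size):
    """Convert a 0-based position in an ascending sort into a 1-based rank from the top."""
    return vocab_size - ascending_index

vocab_size = 50257  # len(tokenizer) printed above

print(rank_from_top(49937, vocab_size))  # positive condition -> 320
print(rank_from_top(47573, vocab_size))  # negative condition -> 2684
```

So “_smile” was the 320th most probable token under the positive prompt and the 2684th under the negative one.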

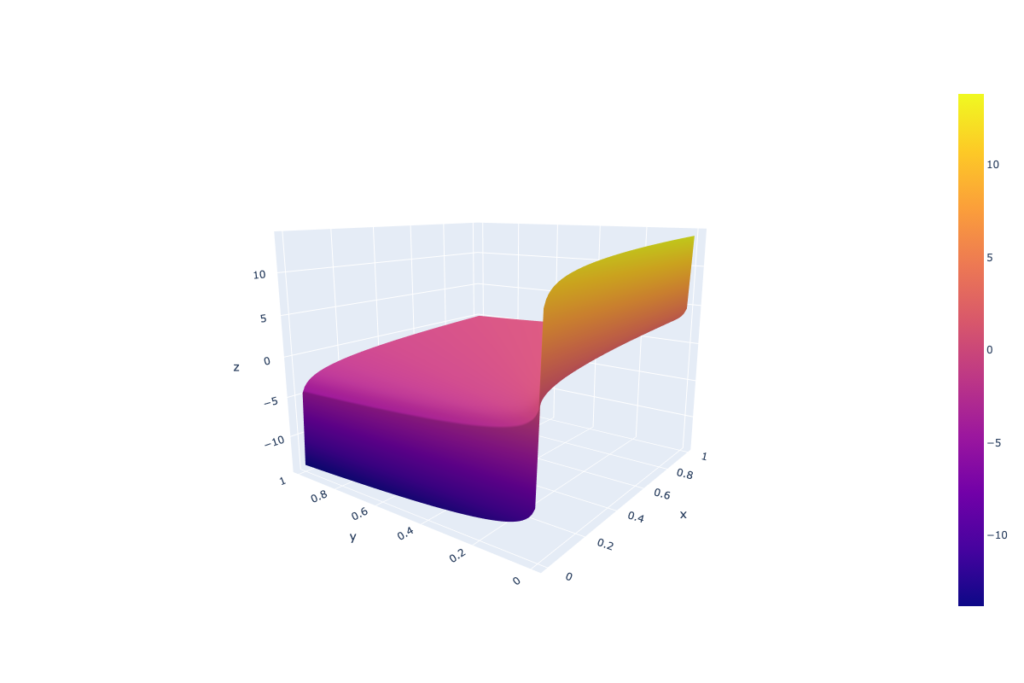

Why does that actually happen? Imagine a token that has a low probability under both conditions: in the positive conditioned generation it has a very low probability, P < 1e-5 (as an example of a low probability), while in the negative conditioned generation its probability is even lower, P → 0. In this case the logarithm of the first probability is a large negative number, while the logarithm of the second tends to minus infinity, so their difference “scores - unconditional_logits” is a large positive number. In such a setup the low-probability token receives a high score after applying a CFG coefficient (guidance scale) higher than 1. This originates from the value range of the “guidance_scale * (scores - unconditional_logits)” component, where “scores” and “unconditional_logits” are obtained through log_softmax.
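The effect can be reproduced without any model. The sketch below applies the log-based CFG step to a made-up 3-token vocabulary; the probabilities are assumptions chosen to resemble the “_smile” case (about 0.0004 under the positive prompt and 0.000025 under the negative one), not values taken from GPT2.

```python
import math

def cfg_log_scores(cond_probs, uncond_probs, guidance_scale):
    """Per token: scale * (log p_cond - log p_uncond) + log p_uncond."""
    return [
        guidance_scale * (math.log(pc) - math.log(pu)) + math.log(pu)
        for pc, pu in zip(cond_probs, uncond_probs)
    ]

def argmax(values):
    return max(range(len(values)), key=values.__getitem__)

# Hypothetical distributions; token 2 is unlikely under both prompts,
# but 16 times less likely under the negative prompt.
cond_probs = [0.60, 0.3996, 0.0004]
uncond_probs = [0.55, 0.449975, 0.000025]

for scale in (1.0, 3.0, 5.0):
    scores = cfg_log_scores(cond_probs, uncond_probs, scale)
    print(scale, argmax(scores), [round(s, 3) for s in scores])
```

With these assumed numbers the greedy choice stays on token 0 for scales 1.0 and 3.0 and flips to token 2 at scale 5.0, even though token 2 has a probability of only 0.04% under the positive prompt: the log-ratio log(16) is multiplied by the scale and outweighs everything else.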

From the image above we can see that such CFG doesn’t treat probabilities equally: very low probabilities can get unexpectedly high scores because of the logarithm component.

In general, how the artefacts look depends on the model, tuning, prompts and other factors, but the nature of the artefacts is a low-probability token getting a high score after applying CFG.

The solution to the issue can be very simple: as mentioned before, the reason is the logarithm component, so let’s just remove it. By doing that we align the text CFG with the diffusion-model CFG, which operates on the model’s predicted scores (not on gradients, as is in fact described in section 3.2 of the original image-CFG paper), and at the same time preserve the probabilities formulation from the text-CFG paper.

The updated implementation requires a tiny change in the “UnbatchedClassifierFreeGuidanceLogitsProcessor” class, which can be applied at the place of the model initialization in the following way:

import torch
from transformers.generation.logits_process import UnbatchedClassifierFreeGuidanceLogitsProcessor

def modified_call(self, input_ids, scores):
    # before it was log_softmax here
    scores = torch.nn.functional.softmax(scores, dim=-1)
    if self.guidance_scale == 1:
        return scores

    logits = self.get_unconditional_logits(input_ids)

    # before it was log_softmax here
    unconditional_logits = torch.nn.functional.softmax(logits[:, -1], dim=-1)
    scores_processed = self.guidance_scale * (scores - unconditional_logits) + unconditional_logits
    return scores_processed

# Monkey-patch the processor so that generate() uses the modified CFG step
UnbatchedClassifierFreeGuidanceLogitsProcessor.__call__ = modified_call

The new value range of the “guidance_scale * (scores - unconditional_logits)” component, where “scores” and “unconditional_logits” are obtained through just softmax:
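In numbers: every softmax output lies in [0, 1], so the difference of two probabilities lies in [-1, 1] and the scaled component stays within [-guidance_scale, guidance_scale], whereas a difference of log-probabilities is unbounded. The sketch below applies the probability-based step to a made-up 3-token vocabulary (the probabilities are assumptions for illustration, not GPT2 values). Note that the resulting scores are no longer log-probabilities, so the sketch only looks at the greedy (argmax) choice, as in the experiments of this article.

```python
def cfg_prob_scores(cond_probs, uncond_probs, guidance_scale):
    """Per token: scale * (p_cond - p_uncond) + p_uncond, on plain probabilities."""
    return [
        guidance_scale * (pc - pu) + pu
        for pc, pu in zip(cond_probs, uncond_probs)
    ]

def argmax(values):
    return max(range(len(values)), key=values.__getitem__)

# Hypothetical distributions; token 2 is unlikely under both prompts.
cond_probs = [0.60, 0.3996, 0.0004]
uncond_probs = [0.55, 0.449975, 0.000025]

for scale in (1.0, 3.0, 5.0):
    scores = cfg_prob_scores(cond_probs, uncond_probs, scale)
    print(scale, argmax(scores), [round(s, 4) for s in scores])
```

With these assumed numbers token 0 remains the greedy choice at every tested scale, because the tiny absolute difference for token 2 (0.000375) cannot be amplified into a dominant score.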

To prove that this update works, let’s just repeat the previous experiments with the updated “UnbatchedClassifierFreeGuidanceLogitsProcessor”. The GPT2 model with CFG coefficients of 3.0 and 5.0 returns the following (I’m printing here the old and new CFG-powered outputs, because the “Positive” and “Negative” outputs remain the same as before: the change has no effect on text generation without CFG):

# Old outputs

## CFG coefficient = 3

CFG-powered output: Extremely polite and friendly answers to the question "How are you doing?" are: 1. Have you ever been to a movie theater? 2. Have you ever been to a concert? 3. Have you ever been to a concert? 4. Have you ever been to a concert? 5. Have you ever been to a concert? 6. Have you ever been to a concert? 7

## CFG coefficient = 5

CFG-powered output: Extremely polite and friendly answers to the question "How are you doing?" are: 1. smile, 2. smile, 3. smile, 4. smile, 5. smile, 6. smile, 7. smile, 8. smile, 9. smile, 10. smile, 11. smile, 12. smile, 13. smile, 14. smile exting.

# New outputs (after updating CFG formula)

## CFG coefficient = 3

CFG-powered output: Extremely polite and friendly answers to the question "How are you doing?" are: 1. "I am doing great," 2. "I am doing great," 3. "I am doing great."

## CFG coefficient = 5

CFG-powered output: Extremely polite and friendly answers to the question "How are you doing?" are: 1. "Good, I am feeling pretty good." 2. "I am feeling pretty good." 3. "You feel pretty good." 4. "I am feeling pretty good." 5. "I am feeling pretty good." 6. "I am feeling pretty good." 7. "I am feeling

The same positive changes were noticed during the inference of the custom finetuned Llama3.1-8B-Instruct model I mentioned earlier:

Before (CFG, guidance scale=3):

“Hello! you don’t have personal name. you’re an interface to provide language understanding”

After (CFG, guidance scale=3):

“Hello! I don’t have a personal name, but you can call me Assistant. How can I help you today?”

Separately, I’ve tested the model’s performance on the benchmarks and automatic tests I was using during the NeurIPS 2024 Privacy Challenge, and the performance was good in both tests (actually, the results I reported in the previous post were obtained after applying the updated CFG formula; additional information is in my arXiv paper). The automatic tests, as I mentioned before, were based on the number of personal data phrases generated in the answers and the accuracy on the MMLU-Pro dataset evaluated with an LLM judge.

The performance didn’t deteriorate on the tests, while the text quality improved according to the manual tests: none of the described artefacts were found.

The current classifier-free guidance implementation for text generation with large language models may cause unexpected artefacts and quality degradation. I’m saying “may” because the artefacts depend on the model, the prompts and other factors. In this article I described my experience and the issues I faced with CFG-enhanced inference. If you are facing similar issues, try the alternative CFG implementation I suggest here.