I’ve been having a lot of fun in my daily work recently experimenting with models from the Hugging Face catalog, and I thought this might be a good time to share what I’ve learned and give readers some tips for how to apply these models with a minimum of stress.

My specific task recently has involved blobs of unstructured text data (think memos, emails, free text comment fields, etc.) and classifying them according to categories that are relevant to a business use case. There are a ton of ways you can do this, and I’ve been exploring as many as I feasibly can, including simple stuff like pattern matching and lexicon search, but also expanding to using pre-built neural network models for a number of different functionalities, and I’ve been moderately pleased with the results.

I think the best strategy is to incorporate multiple techniques, in some form of ensembling, to get the best of the options. I don’t necessarily trust these models to get things right often enough (and definitely not consistently enough) to use them solo, but when combined with more basic techniques they can add to the signal.
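To make the "more basic techniques" concrete, here is a minimal sketch of lexicon search and pattern matching using only the standard library. The categories, keywords, and the order-number format are all made up for illustration; your own lexicon would come from your business use case.

```python
import re

# Hypothetical lexicon: category name -> keywords that suggest it.
LEXICON = {
    "billing": {"invoice", "refund", "charge"},
    "shipping": {"delivery", "package", "tracking"},
}

# Hypothetical pattern: an order number shaped like "ORD-12345".
ORDER_PATTERN = re.compile(r"\bORD-\d{5}\b")


def lexicon_matches(text, lexicon=LEXICON):
    """Return the set of categories whose keywords appear in the text."""
    tokens = set(re.findall(r"[a-z']+", text.lower()))
    return {category for category, words in lexicon.items() if tokens & words}


def mentions_order(text):
    """Return True if the text contains something shaped like an order number."""
    return ORDER_PATTERN.search(text) is not None


# Made-up example text.
sample = "Please refund the charge on ORD-12345, the package never arrived."
print(lexicon_matches(sample))
print(mentions_order(sample))
```

Signals like these are cheap to compute and easy to explain, which is why they pair well with the less predictable model outputs.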

For me, as I’ve mentioned, the task is just to take blobs of text, usually written by a human, with no consistent format or schema, and try to figure out what categories apply to that text. I’ve taken a few different approaches to that, beyond the analysis methods mentioned earlier, and these range from very low effort to somewhat more work on my part. These are three of the strategies that I’ve tested so far.

- Ask the model to choose the category (zero-shot classification; I’ll use this as an example later in this article)

- Use a named entity recognition model to find key objects referenced in the text, and make a classification based on that

- Ask the model to summarize the text, then apply other techniques to make a classification based on the summary
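As a rough illustration of the second strategy, the sketch below turns named entities into categories. It assumes the entities arrive as a list of dicts with `entity_group`, `word`, and `score` keys, which is the shape the Transformers token classification pipeline returns when entities are aggregated. The mapping, the threshold, and the sample entities are hypothetical.

```python
# Hypothetical mapping from entity types to business categories.
ENTITY_TO_CATEGORY = {
    "ORG": "vendor_related",
    "LOC": "location_related",
    "PER": "personnel_related",
}


def categories_from_entities(entities, min_score=0.8):
    """Collect categories for entities the model is reasonably confident about."""
    categories = set()
    for entity in entities:
        if entity["score"] < min_score:
            continue  # Skip low-confidence entities.
        category = ENTITY_TO_CATEGORY.get(entity["entity_group"])
        if category is not None:
            categories.add(category)
    return categories


# Made-up entities in the assumed shape, not real model output.
example_entities = [
    {"entity_group": "ORG", "word": "Acme Corp", "score": 0.97},
    {"entity_group": "LOC", "word": "Denver", "score": 0.55},
    {"entity_group": "MISC", "word": "Q3", "score": 0.91},
]
print(categories_from_entities(example_entities))
```

Only the organization survives here: the location falls below the confidence threshold and the miscellaneous entity has no mapped category.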

This is some of the most fun: looking through the Hugging Face catalog for models! At https://huggingface.co/models you can see a huge collection of the models available, which have been added to the catalog by users. I have a few tips and pieces of advice for how to select wisely.

- Look at the download and like numbers, and don’t choose something that has not been tried and tested by a decent number of other users. You can also check the Community tab on each model page to see if users are discussing challenges or reporting bugs.

- Investigate who uploaded the model, if possible, and determine whether you find them trustworthy. The person who trained or tuned the model may or may not know what they’re doing, and the quality of your results will depend on them!

- Read the documentation closely, and skip models with little or no documentation. You’ll struggle to use them effectively anyway.

- Use the filters on the side of the page to narrow down to models suited to your task. The volume of choices can be overwhelming, but they’re well categorized to help you find what you need.

- Most model cards offer a quick test you can run to see the model’s behavior, but keep in mind that this is just one example, and it was probably chosen because the model is good at it and finds this case fairly easy.

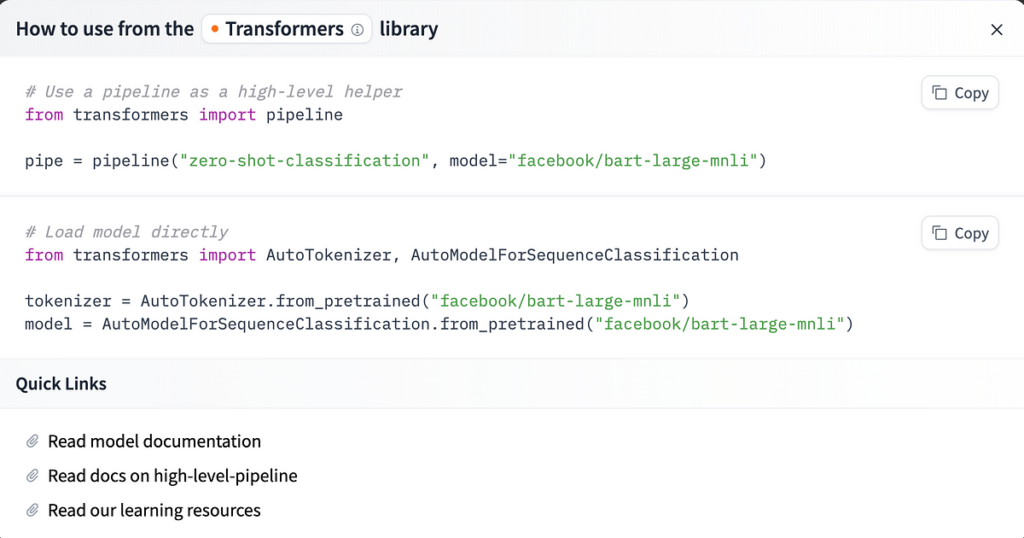

Once you’ve found a model you’d like to try, it’s easy to get going: click the “Use this Model” button on the top right of the Model Card page, and you’ll see the choices for how to implement it. If you choose the Transformers option, you’ll get a short set of instructions with sample code for loading the model.

If a model you’ve chosen is not supported by the Transformers library, there may be other options listed, like TF-Keras, scikit-learn, or more, but all should show instructions and sample code for easy use when you click that button.

In my experiments, all the models were supported by Transformers, so I had a mostly easy time getting them running, just by following these steps. If you find that you have questions, you can also look at the deeper documentation and see full API details for the Transformers library and the different classes it offers. I’ve definitely spent some time looking at these docs for specific classes when optimizing, but to get the basics up and running you shouldn’t really need to.

Ok, so you’ve picked out a model that you want to try. Do you already have data? If not, I’ve been using several publicly available datasets for this experimentation, mainly from Kaggle, and you can find lots of useful datasets there as well. In addition, Hugging Face also has a dataset catalog you can check out, but in my experience it’s not as easy to search or to understand the data contents over there (just not as much documentation).

Once you pick a dataset of unstructured text data, loading it to use in these models isn’t that difficult. Load your model and your tokenizer (from the docs provided on Hugging Face as noted above) and pass all this to the pipeline function from the transformers library. You’ll loop over your blobs of text in a list or pandas Series and pass them to the model function. This is essentially the same for whatever kind of task you’re doing, although for zero-shot classification you also need to provide a candidate label or list of labels, as I’ll show below.
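The loading and looping step might look something like the sketch below. It uses only the standard library so it runs anywhere. The CSV contents, the `comment` column name, and the `fake_model` function are stand-ins I made up; in practice you would pass a real pipeline object in place of `fake_model`.

```python
import csv
import io


def load_texts(csv_file, column):
    """Read one free-text column from a CSV file object, skipping empty cells."""
    reader = csv.DictReader(csv_file)
    return [row[column].strip() for row in reader if row[column] and row[column].strip()]


def run_over_texts(texts, model_fn):
    """Call model_fn on each text and keep each input paired with its output."""
    return [(text, model_fn(text)) for text in texts]


# Stand-in data; in practice csv_file would be an opened file on disk.
raw = io.StringIO('id,comment\n1,"Package arrived late"\n2,""\n3,"  Great service  "\n')
texts = load_texts(raw, column="comment")


def fake_model(text):
    """Stand-in for a real model call; it just reports the text length."""
    return {"length": len(text)}


results = run_over_texts(texts, fake_model)
print(results)
```

Keeping each input next to its output makes it much easier to review results later.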

So, let’s take a closer look at zero-shot classification. As I’ve noted above, this involves using a pretrained model to classify a text according to categories that it hasn’t been specifically trained on, in the hopes that it can use its learned semantic embeddings to measure similarities between the text and the label terms.

    from transformers import AutoModelForSequenceClassification
    from transformers import AutoTokenizer
    from transformers import pipeline

    # Load the NLI model and its tokenizer from the Hugging Face hub.
    nli_model = AutoModelForSequenceClassification.from_pretrained("facebook/bart-large-mnli")
    # model_max_length and use_fast are tokenizer options, so they are set here.
    tokenizer = AutoTokenizer.from_pretrained("facebook/bart-large-mnli", model_max_length=512, use_fast=True)
    classifier = pipeline("zero-shot-classification", device="cpu", model=nli_model, tokenizer=tokenizer)

    label_list = ['News', 'Science', 'Art']

    # list_of_texts is your own list (or pandas Series) of strings.
    all_results = []
    for text in list_of_texts:
        # multi_label=True scores each label independently of the others.
        prob = classifier(text, label_list, multi_label=True)
        results_dict = {x: y for x, y in zip(prob["labels"], prob["scores"])}
        all_results.append(results_dict)

This will return a list of dicts. Each dict has the candidate labels as keys and the probability of each label as values. You don’t have to use the pipeline as I’ve done here, but it makes multi-label zero-shot classification a lot easier than manually writing that code, and it returns results that are easy to interpret and work with.
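Once you have those dicts, one simple way to turn scores into decisions is a threshold. This is a minimal sketch; the 0.5 cutoff and the example scores are made up, and a sensible threshold depends on your own testing.

```python
def labels_above_threshold(results_dict, threshold=0.5):
    """Return labels whose score meets the threshold, highest score first."""
    kept = [(label, score) for label, score in results_dict.items() if score >= threshold]
    kept.sort(key=lambda pair: pair[1], reverse=True)
    return [label for label, _ in kept]


def top_label(results_dict):
    """Return the single highest-scoring label."""
    return max(results_dict, key=results_dict.get)


# Made-up scores in the same shape as one entry of all_results.
example = {"News": 0.91, "Science": 0.62, "Art": 0.08}
print(labels_above_threshold(example))
print(top_label(example))
```

Because multi-label scores are independent, more than one label (or none at all) can clear the threshold for a given text.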

If you prefer not to use the pipeline, you can do something like this instead, but you’ll have to run it once for each label. Notice how the processing of the logits resulting from the model run needs to be specified so that you get human-interpretable output. Also, you still need to load the tokenizer and the model as described above.

    def run_zero_shot_classifier(text, label):
        # Phrase the label as an NLI hypothesis to test against the text.
        hypothesis = f"This example is related to {label}."

        # Encode the (text, hypothesis) pair, truncating only the text if it is too long.
        x = tokenizer.encode(
            text,
            hypothesis,
            return_tensors="pt",
            truncation="only_first"
        )

        logits = nli_model(x.to("cpu"))[0]

        # Keep contradiction (index 0) and entailment (index 2); drop neutral.
        entail_contradiction_logits = logits[:, [0, 2]]
        probs = entail_contradiction_logits.softmax(dim=1)
        # Probability of entailment, i.e. that the label applies.
        prob_label_is_true = probs[:, 1]

        return prob_label_is_true.item()

    label_list = ['News', 'Science', 'Art']
    all_results = []
    for text in list_of_texts:
        for label in label_list:
            result = run_zero_shot_classifier(text, label)
            all_results.append(result)
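To see what the logit processing is doing, here is the same calculation written out in plain Python for a single example. It assumes the logits are ordered contradiction, neutral, entailment, as in the indexing above, and the numbers are made up.

```python
import math


def entailment_probability(logits):
    """Softmax over contradiction and entailment logits, ignoring neutral.

    `logits` is assumed to be ordered [contradiction, neutral, entailment].
    """
    contradiction, _neutral, entailment = logits
    # Subtract the larger value before exponentiating for numerical stability.
    peak = max(contradiction, entailment)
    exp_c = math.exp(contradiction - peak)
    exp_e = math.exp(entailment - peak)
    return exp_e / (exp_c + exp_e)


# Made-up logits for illustration only.
probability = entailment_probability([-1.0, 0.3, 2.0])
print(round(probability, 4))
```

Dropping the neutral logit forces the model to pick between "the label applies" and "the label does not apply", which is what gives you a single probability per label.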

You probably have noticed that I haven’t talked about fine tuning the models myself for this project, and that’s true. I’ll do this in the future, but I’m limited by the fact that I have minimal labeled training data to work with at this time. I can use semisupervised techniques or bootstrap a labeled training set, but this whole experiment has been to see how far I can get with straight off-the-shelf models. I do have a few small labeled data samples, for use in testing the models’ performance, but that’s nowhere near the volume of data I would need to tune the models.

If you do have good training data and would like to tune a base model, Hugging Face has some docs that can help: https://huggingface.co/docs/transformers/en/training

Performance has been an interesting problem, as I’ve run all my experiments on my local laptop so far. Naturally, using these models from Hugging Face will be much more compute intensive and slower than the basic techniques like regex and lexicon search, but it provides signal that can’t really be achieved any other way, so finding ways to optimize can be worthwhile. All these models are GPU enabled, and it’s very easy to push them to be run on GPU. (If you want to try it on GPU quickly, review the code I’ve shown above, and where you see “cpu” substitute in “cuda” if you have a GPU available in your programming environment.) Keep in mind that using GPUs from cloud providers is not cheap, however, so prioritize accordingly and decide if more speed is worth the price.
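If you’d rather not hard-code the device string, a small helper can pick it for you. This is a sketch that assumes PyTorch is the backend; `pick_device` is a name I made up, and it falls back to CPU if PyTorch isn’t installed at all.

```python
def pick_device():
    """Return "cuda" when PyTorch reports a usable GPU, otherwise "cpu"."""
    try:
        import torch
    except ImportError:
        # PyTorch is not installed, so fall back to CPU.
        return "cpu"
    return "cuda" if torch.cuda.is_available() else "cpu"


device = pick_device()
print(device)
```

The resulting string can be used wherever “cpu” appears in the code earlier in this article.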

Most of the time, using the GPU is much more important for training (keep it in mind if you choose to fine tune) but less vital for inference. I’m not digging in to more details about optimization here, but you’ll want to consider parallelism as well if this is important to you: both data parallelism and actual training/compute parallelism.

We’ve run the model! Results are here. I have a few closing tips for how to review the output and actually apply it to business questions.

- Don’t trust the model output blindly, but run rigorous tests and evaluate performance. Just because a transformer model does well on a certain text blob, or is able to correctly match text to a certain label regularly, doesn’t mean this is a generalizable result. Use lots of different examples and different kinds of text to prove the performance is going to be sufficient.

- If you feel confident in the model and want to use it in a production setting, track and log the model’s behavior. This is just good practice for any model in production, but you should keep the results it has produced alongside the inputs you gave it, so you can continually check up on it and make sure the performance doesn’t decline. This is more important for these kinds of deep learning models because we don’t have as much interpretability of why and how the model is coming up with its inferences. It’s dangerous to make too many assumptions about the inner workings of the model.
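For the first tip, even a small hand-labeled sample lets you compute precision and recall per label. The sketch below is one simple way to do that; the predictions and hand labels are made up, and each is represented as a set of labels per text.

```python
def precision_recall(predicted, actual, label):
    """Compute precision and recall for one label over paired label sets."""
    true_pos = sum(1 for p, a in zip(predicted, actual) if label in p and label in a)
    pred_pos = sum(1 for p in predicted if label in p)
    actual_pos = sum(1 for a in actual if label in a)
    # Report 0.0 rather than dividing by zero when a label never appears.
    precision = true_pos / pred_pos if pred_pos else 0.0
    recall = true_pos / actual_pos if actual_pos else 0.0
    return precision, recall


# Made-up predictions and hand labels for four texts, for illustration only.
predicted = [{"News"}, {"News", "Art"}, {"Science"}, set()]
actual = [{"News"}, {"Art"}, {"Science"}, {"News"}]

for label in ["News", "Science", "Art"]:
    print(label, precision_recall(predicted, actual, label))
```

Running the same check on the logged inputs and outputs over time is one way to notice if performance starts to decline.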

As I mentioned earlier, I like using these kinds of model output as part of a larger pool of techniques, combining them in ensemble strategies. That way I’m not only relying on one approach, but I do get the signal those inferences can provide.
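There are many ways to ensemble, and this is just one rough sketch: a weighted average of the model’s probability for a label with two yes/no signals from simpler rules. The weights are arbitrary placeholders, not values I’ve tuned, and you would want to calibrate them against labeled examples.

```python
def ensemble_score(model_score, lexicon_hit, pattern_hit, weights=(0.5, 0.3, 0.2)):
    """Weighted average of a model probability and two binary rule signals."""
    w_model, w_lexicon, w_pattern = weights
    total = w_model + w_lexicon + w_pattern
    combined = (
        w_model * model_score
        + w_lexicon * (1.0 if lexicon_hit else 0.0)
        + w_pattern * (1.0 if pattern_hit else 0.0)
    )
    # Normalize so the result stays between 0 and 1 for any positive weights.
    return combined / total


# Made-up inputs: a middling model score backed up by a lexicon match.
score = ensemble_score(model_score=0.6, lexicon_hit=True, pattern_hit=False)
print(round(score, 3))
```

A lexicon match pulls an uncertain model score up, while a confident model score with no supporting rules gets pulled down.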

I hope this overview is useful for those of you getting started with pre-trained models for text (or other mode) analysis. Good luck!