It is the perennial “cocktail party problem” – standing in a room full of people, drink in hand, trying to hear what your fellow guest is saying.

In fact, human beings are remarkably adept at holding a conversation with one person while filtering out competing voices.

However, perhaps surprisingly, it is a skill that technology has until recently been unable to replicate.

And that matters when it comes to using audio evidence in court cases. Voices in the background can make it hard to be certain who’s speaking and what’s being said, potentially making recordings useless.

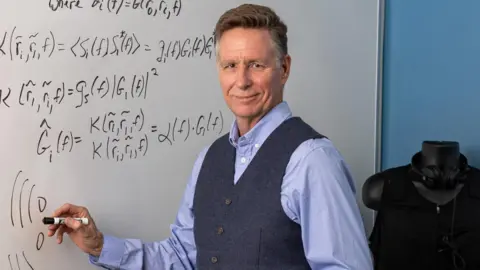

Electrical engineer Keith McElveen, founder and chief technology officer of Wave Sciences, became interested in the problem when he was working for the US government on a war crimes case.

“What we were trying to figure out was who ordered the massacre of civilians. Some of the evidence included recordings with a bunch of voices all talking at once – and that’s when I learned what the ‘cocktail party problem’ was,” he says.

“I had been successful in removing noise like automobile sounds or air conditioners or fans from speech, but when I started trying to remove speech from speech, it turned out not only to be a very difficult problem, it was one of the classic hard problems in acoustics.

“Sounds are bouncing round a room, and it’s mathematically horrible to solve.”

The answer, he says, was to use AI to try to pinpoint and screen out all competing sounds based on where they originally came from in a room.

This does not just mean other people who may be speaking – there’s also a significant amount of interference from the way sounds are reflected around a room, with the target speaker’s voice being heard both directly and indirectly.

In a perfect anechoic chamber – one totally free from echoes – one microphone per speaker would be enough to pick up what everyone was saying; but in a real room, the problem requires a microphone for every reflected sound too.

Mr McElveen founded Wave Sciences in 2009, hoping to develop a technology that could separate overlapping voices. Initially the firm used large numbers of microphones in what is known as array beamforming.
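For readers curious what array beamforming involves, the simplest textbook form is "delay and sum": shift each microphone's signal so that sound from the chosen direction lines up, then average. The sketch below is only an illustration of that general idea, not Wave Sciences' system. The eight-microphone array, the whole-sample delays, the random-noise "voices" and the echo-free room are all simplifying assumptions.

```python
import random


def delayed(signal, delay):
    """Shift a signal later by `delay` samples, padding the start with silence."""
    if delay == 0:
        return list(signal)
    return [0.0] * delay + list(signal[:-delay])


def delay_and_sum(mics, steering_delays):
    """Advance each channel by its steering delay, then average the channels."""
    n = len(mics[0])
    out = [0.0] * n
    for channel, d in zip(mics, steering_delays):
        for i in range(n - d):
            out[i] += channel[i + d]
    return [v / len(mics) for v in out]


def error_power(estimate, reference, usable):
    """Mean squared difference over the first `usable` samples."""
    pairs = zip(estimate[:usable], reference[:usable])
    return sum((e - r) ** 2 for e, r in pairs) / usable


random.seed(1)
n, num_mics = 2000, 8

# Two hypothetical talkers, modelled as independent random noise.
target = [random.gauss(0, 1) for _ in range(n)]
interferer = [random.gauss(0, 1) for _ in range(n)]

# Assumed arrival delays (in samples) at each microphone. They differ
# between the talkers because the talkers sit in different directions.
target_delays = [2 * k for k in range(num_mics)]
interferer_delays = [5 * k for k in range(num_mics)]

# Each microphone hears both talkers mixed together.
mics = []
for k in range(num_mics):
    t = delayed(target, target_delays[k])
    v = delayed(interferer, interferer_delays[k])
    mics.append([a + b for a, b in zip(t, v)])

# Steer the array at the target: its copies line up, the interferer's do not.
beamformed = delay_and_sum(mics, target_delays)

usable = n - max(target_delays)
single_mic_error = error_power(mics[0], target, usable)
beamformed_error = error_power(beamformed, target, usable)
print(f"single microphone error: {single_mic_error:.3f}")
print(f"beamformed error:        {beamformed_error:.3f}")
```

In this toy setup the unwanted voice shrinks roughly in proportion to the number of microphones, which hints at why early array systems needed so many of them.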

However, feedback from potential commercial partners was that the system required too many microphones for the cost involved to give good results in many situations – and wouldn’t perform at all in many others.

“The common refrain was that if we could come up with a solution that addressed those concerns, they’d be very interested,” says Mr McElveen.

And, he adds: “We knew there had to be a solution, because you can do it with just two ears.”

The company finally solved the problem after 10 years of internally funded research and filed a patent application in September 2019.

What they had come up with was an AI that can analyse how sound bounces around a room before reaching the microphone or ear.

“We catch the sound as it arrives at each microphone, backtrack to figure out where it came from, and then, in essence, we suppress any sound that couldn’t have come from where the person is sitting,” says Mr McElveen.
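The "backtracking" step can be illustrated with a standard textbook technique: compare when the same sound reaches two microphones, and use that time difference to work out the direction it came from. The sketch below is an assumption-laden illustration, not the company's algorithm. The 16 kHz sample rate, the 20 cm microphone spacing, the five-sample delay and the echo-free setting are all invented for the example.

```python
import math
import random


def estimate_delay(mic_a, mic_b, max_lag):
    """Return the lag (in samples) at which mic_b best matches mic_a.

    A positive lag means the sound reached mic_b later than mic_a.
    """
    best_lag, best_score = 0, float("-inf")
    n = len(mic_a)
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for i in range(n):
            j = i + lag
            if 0 <= j < n:
                score += mic_a[i] * mic_b[j]
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


random.seed(0)
source = [random.gauss(0, 1) for _ in range(400)]

# Simulate one sound reaching the second microphone 5 samples late.
true_delay = 5
mic_a = source
mic_b = [0.0] * true_delay + source[:-true_delay]

estimated = estimate_delay(mic_a, mic_b, max_lag=20)

# Convert the delay into an angle of arrival (far-field approximation).
sample_rate = 16000      # samples per second (assumed)
mic_spacing = 0.2        # metres between the microphones (assumed)
speed_of_sound = 343.0   # metres per second in air at about 20C

path_difference = speed_of_sound * estimated / sample_rate
angle_degrees = math.degrees(math.asin(path_difference / mic_spacing))
print(f"estimated delay: {estimated} samples")
print(f"estimated angle: {angle_degrees:.1f} degrees off centre")
```

A system working this way could then turn down any sound whose estimated direction does not match where the speaker of interest is sitting. Real rooms are far harder, because reflections arrive from many directions at once.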

The effect is comparable in certain respects to when a camera focusses on one subject and blurs out the foreground and background.

“The results don’t sound crystal clear when you can only use a very noisy recording to learn from, but they’re still stunning.”

The technology had its first real-world forensic use in a US murder case, where the evidence it was able to provide proved central to the convictions.

After two hitmen were arrested for killing a man, the FBI wanted to prove that they’d been hired by a family going through a child custody dispute. The FBI arranged to trick the family into believing that they were being blackmailed for their involvement – and then sat back to see the reaction.

While texts and phone calls were reasonably easy for the FBI to access, in-person meetings in two restaurants were a different matter. But the court authorised the use of Wave Sciences’ algorithm, meaning that the audio went from being inadmissible to a pivotal piece of evidence.

Since then, other government laboratories, including in the UK, have put it through a battery of tests. The company is now marketing the technology to the US military, which has used it to analyse sonar signals.

It could also have applications in hostage negotiations and suicide scenarios, says Mr McElveen, to make sure both sides of a conversation can be heard – not just the negotiator with a megaphone.

Late last year, the company released a software application using its learning algorithm for use by government labs performing audio forensics and acoustic analysis.

Eventually it aims to introduce tailored versions of its product for use in audio recording kit, voice interfaces for cars, smart speakers, augmented and virtual reality, sonar and hearing aid devices.

So, for example, if you speak to your car or smart speaker, it wouldn’t matter if there was a lot of noise going on around you: the device would still be able to make out what you were saying.

AI is already being used in other areas of forensics too, according to forensic educator Terri Armenta of the Forensic Science Academy.

“ML [machine learning] models analyse voice patterns to determine the identity of speakers, a process particularly useful in criminal investigations where voice evidence needs to be authenticated,” she says.

“Additionally, AI tools can detect manipulations or alterations in audio recordings, ensuring the integrity of evidence presented in court.”

And AI has also been making its way into other aspects of audio analysis.

Bosch has a technology called SoundSee, which uses audio signal processing algorithms to analyse, for instance, a motor’s sound to predict a malfunction before it happens.

“Traditional audio signal processing capabilities lack the ability to understand sound the way we humans do,” says Dr Samarjit Das, director of research and technology at Bosch USA.

“Audio AI enables deeper understanding and semantic interpretation of the sound of things around us better than ever before – for example, environmental sounds or sound cues emanating from machines.”

More recent tests of the Wave Sciences algorithm have shown that, even with just two microphones, the technology can perform as well as the human ear – better, when more microphones are added.

And they also revealed something else.

“The math in all our tests shows remarkable similarities with human hearing. There’s little oddities about what our algorithm can do, and how accurately it can do it, that are astonishingly similar to some of the oddities that exist in human hearing,” says Mr McElveen.

“We suspect that the human brain may be using the same math – that in solving the cocktail party problem, we may have stumbled upon what’s really happening in the brain.”