Representation Finetuning: Beyond the PEFT Techniques for Fine-Tuning LLMs

Hasn’t everybody started using ReFT yet?

Stanford published the paper ReFT: Representation Finetuning for Language Models in May 2024, which immediately showed its great potential. In July 2024, Oxen.ai presented an experiment finetuning Llama3 (8B) on a single Nvidia A10 GPU within 14 minutes, further demonstrating this technique's power.

Unlike SOTA PEFT methods, which focus on modifying the model weights or input, the ReFT technique is based on a previously proposed distributed interchange intervention (DII) method. The DII method first projects the embedding from the deep learning model to a lower-dimensional subspace and then intervenes through that subspace for fine-tuning purposes.

In the following, we’ll first walk the readers through SOTA PEFT fine-tuning algorithms such as LoRA, prompt tuning, and prefix tuning; then we’ll discuss the original DII method to provide better context for understanding; finally, we’ll discuss the ReFT technique and present the results from the paper.

PEFT: Parameter-Efficient Finetuning Techniques

Hugging Face has a blog detailing different PEFT techniques for fine-tuning LLMs. Here, we quickly recap these techniques.

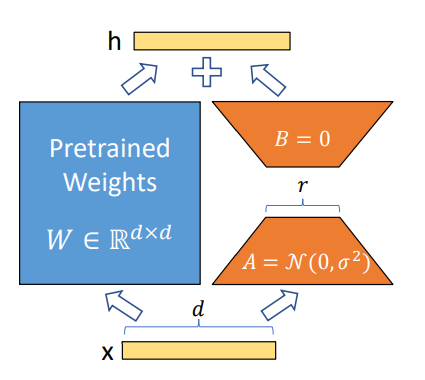

Proposed in 2021, LoRA has become one of the most successful techniques for fine-tuning LLMs and diffusion models (e.g., Time-varying LoRA) due to its simplicity and generalization ability. The idea is simple: instead of fine-tuning the original weight parameters for each layer, the LoRA technique adds two low-rank matrices and only finetunes the low-rank matrices. The trainable parameters can be reduced to less than 0.3% of the whole network during fine-tuning, which significantly accelerates the learning process and reduces GPU memory usage.
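To make the low-rank idea concrete, here is a minimal NumPy sketch of a single LoRA-style layer. The layer size, the rank, and the names `W`, `A`, `B`, and `lora_forward` are assumptions chosen for illustration, not values from the paper, and no training loop is shown.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical layer size and LoRA rank, chosen only for illustration.
d_out, d_in, rank = 512, 512, 4

W = rng.normal(size=(d_out, d_in))         # frozen pre-trained weight
A = rng.normal(size=(rank, d_in)) * 0.01   # trainable low-rank factor, small random init
B = np.zeros((d_out, rank))                # trainable low-rank factor, zero init

def lora_forward(x):
    """Compute (W + B A) x without materializing the full d_out x d_in update."""
    return W @ x + B @ (A @ x)

x = rng.normal(size=d_in)
y = lora_forward(x)

# Only A and B would receive gradients; W stays frozen.
trainable = A.size + B.size
total = W.size + trainable
print(f"trainable fraction for this single layer: {trainable / total:.2%}")
```

Because `B` starts at zero, the adapted layer initially reproduces the frozen layer exactly. The fraction printed here is for one toy layer only; the overall figure for a real network depends on which layers are adapted and on the chosen rank.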

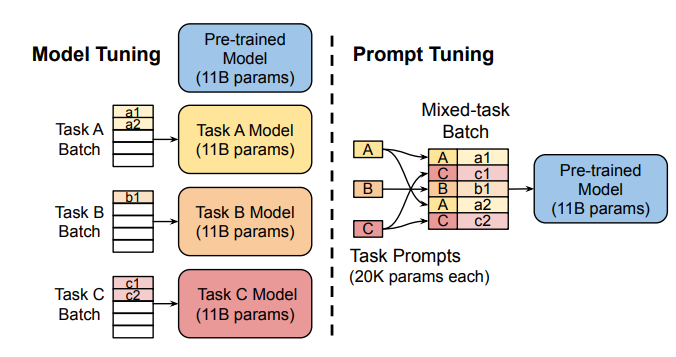

Instead of changing the pre-trained model’s inner layers, the Prompt Tuning technique proposed to use “soft prompts,” learnable task-specific prompt embeddings that are prepended to the input as a prefix. Given mixed-task batch prompts, the model can efficiently perform multi-task prediction without an extra task-specific model copy (as opposed to the Model Tuning in the following left sub-figure).
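The soft-prompt mechanism can be sketched in a few lines of NumPy. This is only an illustration under assumed toy dimensions; the task names, `embedding_table`, `soft_prompts`, and `build_input` are hypothetical and do not come from the Prompt Tuning paper or any library.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical toy sizes, chosen only for illustration.
vocab_size, d_model, prompt_len = 100, 16, 5

embedding_table = rng.normal(size=(vocab_size, d_model))  # frozen token embeddings

# One learnable soft prompt per task; these would be the only trained parameters.
soft_prompts = {
    "sentiment": rng.normal(size=(prompt_len, d_model)),
    "summarize": rng.normal(size=(prompt_len, d_model)),
}

def build_input(task, token_ids):
    """Prepend the task's soft prompt to the frozen token embeddings."""
    token_embeds = embedding_table[np.asarray(token_ids)]
    return np.concatenate([soft_prompts[task], token_embeds], axis=0)

# A mixed-task batch: every example goes through the same frozen model,
# and only the prepended prompt differs by task.
batch = [("sentiment", [3, 14, 15]), ("summarize", [9, 26, 5])]
inputs = np.stack([build_input(task, ids) for task, ids in batch])
print(inputs.shape)
```

Since the task-specific part lives entirely in the prepended embeddings, a single frozen model copy can serve both tasks in the same batch.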

To provide universality for prompt tuning models at scale (e.g., over 10B parameters), Prefix Tuning (P-Tuning v2) proposed to prefix trainable prompt embeddings at different layers, which allows learning task-specific information at various scales.

Among all these PEFT techniques, LoRA is the most widely used in fine-tuning LLMs for its robustness and efficiency. A detailed empirical analysis can be found in this paper.

Distributed Interchange Intervention (DII)

Causal abstraction is a robust artificial intelligence framework that uses the intervention between a causal model (a high-level model) and a neural network model (or a low-level model) to induce alignment estimation. If there exists an alignment between the two models, we know the underlying mechanisms between the causal model and the NN are the same. The approach of discovering the underlying alignment by intervention is called interchange intervention (II), which is intuitively explained in this lecture video.

However, classical causal abstraction uses brute force to search through all possible alignments of model states, which is suboptimal. A Distributed Interchange Intervention (DII) system first projects high-level and low-level models to sub-spaces through a series of orthogonal projections and then produces an intervened model using certain rotation operations. A fascinating intervention experiment on vision models can be found here.

More specifically, the DII can be written as follows:

$$\mathrm{DII}(b, s, R) = b + R^{\top}(Rs - Rb)$$

where R is a low-rank matrix with orthogonal rows, indicating orthogonal projections; b and s are two different representations encoded by the model from two different inputs; the intervention happens in the low-rank space, i.e., the space that contains Rs and Rb; and the projection matrix R is learnt by distributed alignment search (DAS), which optimizes towards “the subspace that would maximize the probability of expected counterfactual output after intervention.”
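A small numerical sketch can help show what this intervention does. It assumes the form DII(b, s, R) = b + Rᵀ(Rs − Rb) given in the ReFT paper; the dimensions are hypothetical, and R is a random matrix with orthonormal rows rather than one learnt by DAS.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: an 8-dimensional representation and a 2-dimensional subspace.
d, r = 8, 2

# Build R with orthonormal rows from a reduced QR decomposition (not a learnt R).
Q, _ = np.linalg.qr(rng.normal(size=(d, r)))  # Q has orthonormal columns, shape (d, r)
R = Q.T                                       # shape (r, d), so R @ R.T is the identity

def dii(b, s, R):
    """Replace b's coordinates in the subspace spanned by R's rows with those of s."""
    return b + R.T @ (R @ s - R @ b)

b = rng.normal(size=d)   # base representation
s = rng.normal(size=d)   # source representation
out = dii(b, s, R)

# Inside the subspace the result matches the source ...
print(np.allclose(R @ out, R @ s))
# ... and outside the subspace it still matches the base.
print(np.allclose(out - R.T @ (R @ out), b - R.T @ (R @ b)))
```

Both checks follow from the rows of R being orthonormal: projecting the output onto the subspace recovers Rs, while the component orthogonal to the subspace is untouched.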

ReFT: Representation Finetuning

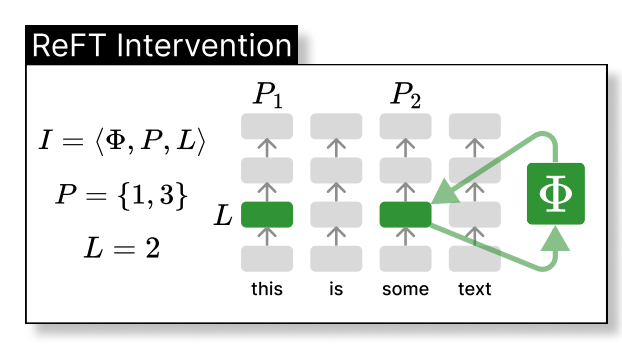

Thus, the ReFT technique can be seen as an intervention on the model's hidden representation in a lower-dimensional space, as illustrated below, where phi is the intervention and is applied directly to the hidden representation at layer L and position P:

Specifically, the paper further proposes Low-rank Linear Subspace ReFT (LoReFT), which introduces a learnt projected source:

$$\Phi_{\mathrm{LoReFT}}(h) = h + R^{\top}(Wh + b - Rh)$$

where h is the hidden representation and Rs = Wh + b is the learnt projected source, which edits the representation h in the projected low-dimensional space spanned by R. Now, we can illustrate the LoReFT in the original deep neural network layer below.
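As a rough illustration of the LoReFT edit, the sketch below applies h + Rᵀ(Wh + b − Rh), the form given in the ReFT paper, to a single hidden vector. The dimensions are hypothetical and `R`, `W`, and `bias` are random stand-ins for parameters that would be learnt during fine-tuning.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: hidden dimension 8, intervention rank 2.
d, r = 8, 2

Q, _ = np.linalg.qr(rng.normal(size=(d, r)))
R = Q.T                         # (r, d) projection with orthonormal rows
W = rng.normal(size=(r, d))     # linear map producing the projected source
bias = rng.normal(size=r)       # the "b" in phi = {R, W, b}

def loreft(h):
    """Edit h only inside the r-dimensional subspace spanned by R's rows."""
    projected_source = W @ h + bias            # plays the role of Rs
    return h + R.T @ (projected_source - R @ h)

h = rng.normal(size=d)
h_edit = loreft(h)

# In the subspace, the edited representation equals the learnt projected source.
print(np.allclose(R @ h_edit, W @ h + bias))
```

Compared with DII, the source is no longer taken from a second input; it is computed from h itself through the learnt linear map.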

When fine-tuning an LLM, the parameters of the LM are kept frozen while only the parameters of the projection phi = {R, W, b} are trained.
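To get a feel for how small phi is, the following back-of-the-envelope count may help. It assumes one independent {R, W, b} per intervention, with R and W of shape rank × hidden size and b of length rank. The rank, the number of interventions, and the round 8 billion figure for the frozen model are hypothetical choices, not the paper's configuration; 4096 is the hidden size of Llama 3 8B.

```python
def loreft_param_count(hidden_size: int, rank: int, n_interventions: int = 1) -> int:
    """Count trainable parameters, assuming one {R, W, b} per intervention."""
    r_params = rank * hidden_size   # R: rank x hidden_size
    w_params = rank * hidden_size   # W: rank x hidden_size
    b_params = rank                 # b: rank
    return n_interventions * (r_params + w_params + b_params)

hidden_size = 4096   # hidden size of Llama 3 8B
rank = 4             # hypothetical intervention rank

per_intervention = loreft_param_count(hidden_size, rank)
total_trainable = loreft_param_count(hidden_size, rank, n_interventions=8)  # hypothetical count

frozen = 8_000_000_000   # round figure for the frozen LM parameters
fraction = total_trainable / frozen

print(per_intervention)            # 32772
print(total_trainable)             # 262176
print(f"{fraction:.6%} of the frozen model")
```

Under these assumptions the trainable set is a few hundred thousand parameters next to billions of frozen ones; the actual numbers reported in the paper depend on its chosen layers, positions, and rank.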

Experiments

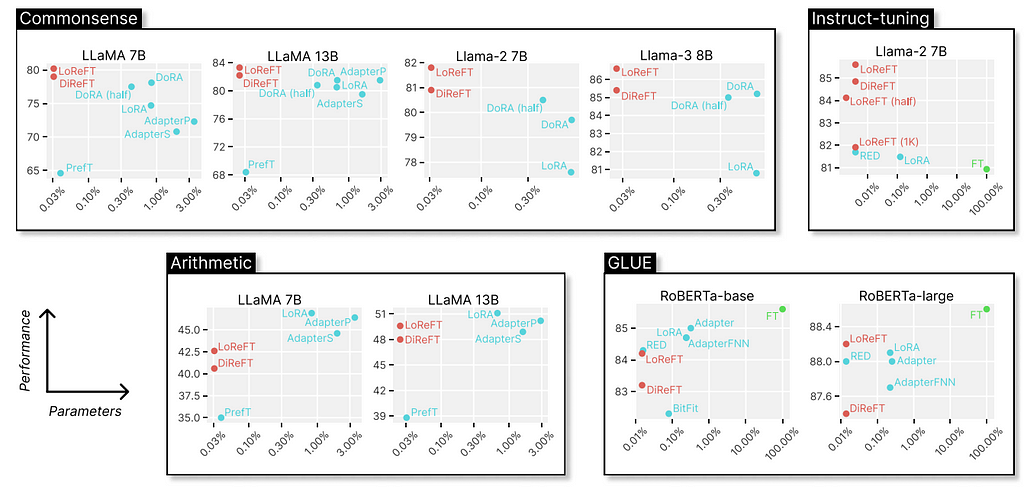

The original paper shows experiments comparing LoReFT (and other methods from the ReFT family) to full fine-tuning (FT), LoRA, Prefix-tuning, etc., on four types of benchmarks: commonsense reasoning, arithmetic reasoning, instruction following, and natural language understanding. We can see that, compared to LoRA, the ReFT techniques further reduce the parameters by at least 90% while achieving higher performance by a large margin.

Discussions

Why is ReFT so fascinating? Firstly, the technique provides convincing results with Llama-family models on various benchmarks, outperforming the SOTA fine-tuning methods. Secondly, the technique is deeply rooted in the causal abstraction algorithm, which offers further ground for model interpretation, especially from the hidden representation’s perspective. As mentioned in the original paper, ReFT shows that “a linear subspace distributed across a set of neurons can achieve generalized control over a vast number of tasks,” which might further open doors for helping us better understand large language models.

References

- Wu Z, Arora A, Wang Z, Geiger A, Jurafsky D, Manning CD, Potts C. ReFT: Representation finetuning for language models. arXiv preprint arXiv:2404.03592. 2024 Apr 4.

- Hu EJ, Shen Y, Wallis P, Allen-Zhu Z, Li Y, Wang S, Wang L, Chen W. LoRA: Low-rank adaptation of large language models. arXiv preprint arXiv:2106.09685. 2021 Jun 17.

- Zhuang Z, Zhang Y, Wang X, Lu J, Wei Y, Zhang Y. Time-Varying LoRA: Towards Effective Cross-Domain Fine-Tuning of Diffusion Models. In The Thirty-eighth Annual Conference on Neural Information Processing Systems 2024.

- Liu X, Ji K, Fu Y, Tam WL, Du Z, Yang Z, Tang J. P-tuning v2: Prompt tuning can be comparable to fine-tuning universally across scales and tasks. arXiv preprint arXiv:2110.07602. 2021 Oct 14.

- Geiger A, Wu Z, Potts C, Icard T, Goodman N. Finding alignments between interpretable causal variables and distributed neural representations. In Causal Learning and Reasoning 2024 Mar 15 (pp. 160–187). PMLR.

- Lester B, Al-Rfou R, Constant N. The power of scale for parameter-efficient prompt tuning. arXiv preprint arXiv:2104.08691. 2021 Apr 18.

- Pu G, Jain A, Yin J, Kaplan R. Empirical analysis of the strengths and weaknesses of PEFT techniques for LLMs. arXiv preprint arXiv:2304.14999. 2023 Apr 28.

Is ReFT All We Needed? was originally published in Towards Data Science on Medium, where people are continuing the conversation by highlighting and responding to this story.